Security has long been a worry for Internet of Things projects, and for many organizations with active or planned IoT deployments, security concerns have hampered digital ambitions. By implementing IoT security best practices, however, organizations can reduce that risk.

Technological fragmentation is not only one of the biggest barriers to IoT adoption; it also complicates the goal of securing connected devices and related services. With IoT-related cyberattacks on the rise, organizations must become more adept at managing cyber-risk or face potential reputational and legal consequences. This article summarizes best practices for enterprise and industrial IoT projects.

Key takeaways from this article include the following:

- Data security remains a central technology hurdle related to IoT deployments.

- IoT security best practices also can help organizations curb the risk of broader digital transformation initiatives.

- Securing IoT projects requires a comprehensive view that encompasses the entire life cycle of connected devices and relevant supply chains.

Fragmentation and security have long been two of the most significant barriers to Internet of Things adoption. The two challenges are also closely related.

Despite the Internet of Things (IoT) moniker, which implies a synthesis of connected devices, IoT technologies vary considerably based on their intended use. Organizations deploying IoT thus rely on an array of connectivity types, standards and hardware. As a result, even a simple IoT device can present many security vulnerabilities, including weak authentication, insecure cloud integration, and outdated firmware and software.

For many organizations with active or planned IoT deployments, security concerns have hampered digital ambitions. An August 2020 IoT World Today survey identified data security as the top technology hurdle for IoT deployments, selected by 46% of respondents.

Fortunately, IoT security best practices can help organizations reduce the risks facing their deployments and broader digital transformation initiatives. These same best practices can also reduce legal liability and protect an organization’s reputation.

But to be effective, an IoT-focused security strategy requires a broad view that encompasses the entire life cycle of an organization’s connected devices and projects in addition to relevant supply chains.

Know What You Have and What You Need

Asset management is a cornerstone of effective cyber defence. Organizations should identify which processes and systems need protection. They should also strive to assess the risk that cyberattacks pose to those assets and to their broader operations.

In enterprise and industrial IoT deployments, asset awareness is frequently spotty. Achieving it can be challenging given the array of industry verticals and the lack of comprehensive tools to track assets across those verticals. But asset awareness also demands a contextual understanding of the computing environment, including the interplay among devices, personnel, data and systems, as the National Institute of Standards and Technology (NIST) has observed.

There are two fundamental questions when creating an asset inventory: What is on my network? And what are these assets doing on my network?

Answering the latter requires tracking endpoints’ behaviours and their intended purpose from a business or operational perspective. From a networking perspective, asset management should involve more than counting networking nodes; it should focus on data protection and building intrinsic security into business processes.
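The two inventory questions above can be sketched as a small record plus a comparison against observed traffic. This is a minimal illustration rather than a real asset-management tool: the `Asset` fields, the `unexpected_peers` helper and the example hostnames are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """One inventory entry: what the device is and what it is meant to do."""
    asset_id: str
    kind: str        # answers "what is on my network?"
    purpose: str     # answers "what is it doing there?" in business terms
    expected_peers: set = field(default_factory=set)  # hosts it should talk to


def unexpected_peers(asset: Asset, observed_peers: set) -> set:
    """Return observed destinations that are not part of the asset's documented role."""
    return observed_peers - asset.expected_peers


# Hypothetical inventory entry and hypothetical traffic observations.
sensor = Asset(
    asset_id="hvac-sensor-01",
    kind="temperature sensor",
    purpose="report plant-floor temperature to the building gateway",
    expected_peers={"gateway.plant.example"},
)
observed = {"gateway.plant.example", "203.0.113.7"}
print(unexpected_peers(sensor, observed))
```

Recording the intended purpose next to each device is what makes the second question answerable: any destination outside the documented role becomes a candidate for review.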

Relevant considerations include the following:

- Compliance with relevant security and privacy laws and standards.

- Interval of security assessments.

- Optimal access of personnel to facilities, information and technology, whether remote or in-person.

- Data protection for sensitive information, including strong encryption for data at rest and data in transit.

- Degree of security automation versus manual controls, as well as physical security controls to ensure worker safety.

IoT device makers and application developers also should implement a vulnerability disclosure program that includes public contact information for security researchers and plans for responding to disclosed vulnerabilities. Bug bounty programs are another option.

Organizations that have accurately assessed current cybersecurity readiness need to set relevant goals and create a comprehensive governance program to manage and enforce operational and regulatory policies and requirements. Governance programs also ensure that appropriate security controls are in place. Organizations need to have a plan to implement controls and determine accountability for that enforcement. Another consideration is determining when security policies need to be revised.

An effective governance plan is vital for engineering security into architecture and processes, as well as for safeguarding legacy devices with relatively weak security controls. Devising an effective risk management strategy for enterprise and industrial IoT devices is a complex endeavour, potentially involving a series of stakeholders and entities. Organizations that find it difficult to assess the cybersecurity of their IoT project should consider third-party assessments.

Many tools are available to help organizations evaluate cyber-risk and defences. These include the National Vulnerability Database and the Security and Privacy Controls for Information Systems and Organizations document (SP 800-53), both from the National Institute of Standards and Technology. Another resource is the list of 20 Critical Security Controls for Effective Cyber Defense. In terms of studying the threat landscape, MITRE ATT&CK is one of the most popular frameworks for adversary tactics and techniques.

At this stage of the process, another vital consideration is the degree of cybersecurity savviness and support within your business. Three out of ten organizations deploying IoT cite lack of support for cybersecurity as a hurdle, according to August 2020 research from IoT World Today. Security awareness is also frequently a challenge. Many cyberattacks against organizations — including those with an IoT element — involve phishing, like the 2015 attack against Ukraine’s electric grid.

IoT Security Best Practices

Internet of Things projects demand a secure foundation. That starts with asset awareness and extends into responding to real and simulated cyberattacks.

Step 1: Know what you have.

Building an IoT security program starts with achieving a comprehensive understanding of which systems need to be protected.

Step 2: Deploy safeguards.

Shielding devices from cyber-risk requires a thorough approach. This step involves cyber-hygiene, effective asset control and the use of other security controls.

Step 3: Identify threats.

Spotting anomalies can help mitigate attacks. Defenders should hone their skills through wargaming.

Step 4: Respond effectively.

Cyberattacks are inevitable, but each one should yield lessons that feed back into step 1.

Exploiting human gullibility is one of the most common cybercriminal strategies. While cybersecurity training can help individuals recognize suspected malicious activities, such programs tend not to be entirely effective. “It only takes one user and one click to introduce an exploit into a network,” wrote Forrester analyst Chase Cunningham in the book “Cyber Warfare.” Recent studies have found that, even after receiving cybersecurity training, employees continue to click on phishing links about 3% of the time.

Security teams should work to earn the support of colleagues, while also factoring in the human element, according to David Coher, former head of reliability and cybersecurity for a major electric utility. “You can do what you can in terms of educating folks, whether it’s as a company IT department or as a consumer product manufacturer,” he said. But it is essential to put controls in place that can withstand user error and occasionally sloppy cybersecurity hygiene.

At the same time, organizations should also look to pool cybersecurity expertise inside and outside the business. “Designing the controls that are necessary to withstand user error requires understanding what users do and why they do it,” Coher said. “That means pulling together users from throughout your organization’s user chain — internal and external, vendors and customers, and counterparts.”

Those counterparts are easier to engage in some industries than others. Utilities, for example, have a strong track record in this regard, because of the limited market competition between them. Collaboration “can be more challenging in other industries, but no less necessary,” Coher added.

Deploy Appropriate Safeguards

Protecting an organization from cyberattacks demands a clear framework that is sensitive to business needs. While regulated industries are obligated to comply with specific cybersecurity-related requirements, consumer-facing organizations tend to have more generic requirements for privacy protections, data breach notifications and so forth. That said, all types of organizations deploying IoT have leeway in selecting a guiding philosophy for their cybersecurity efforts.

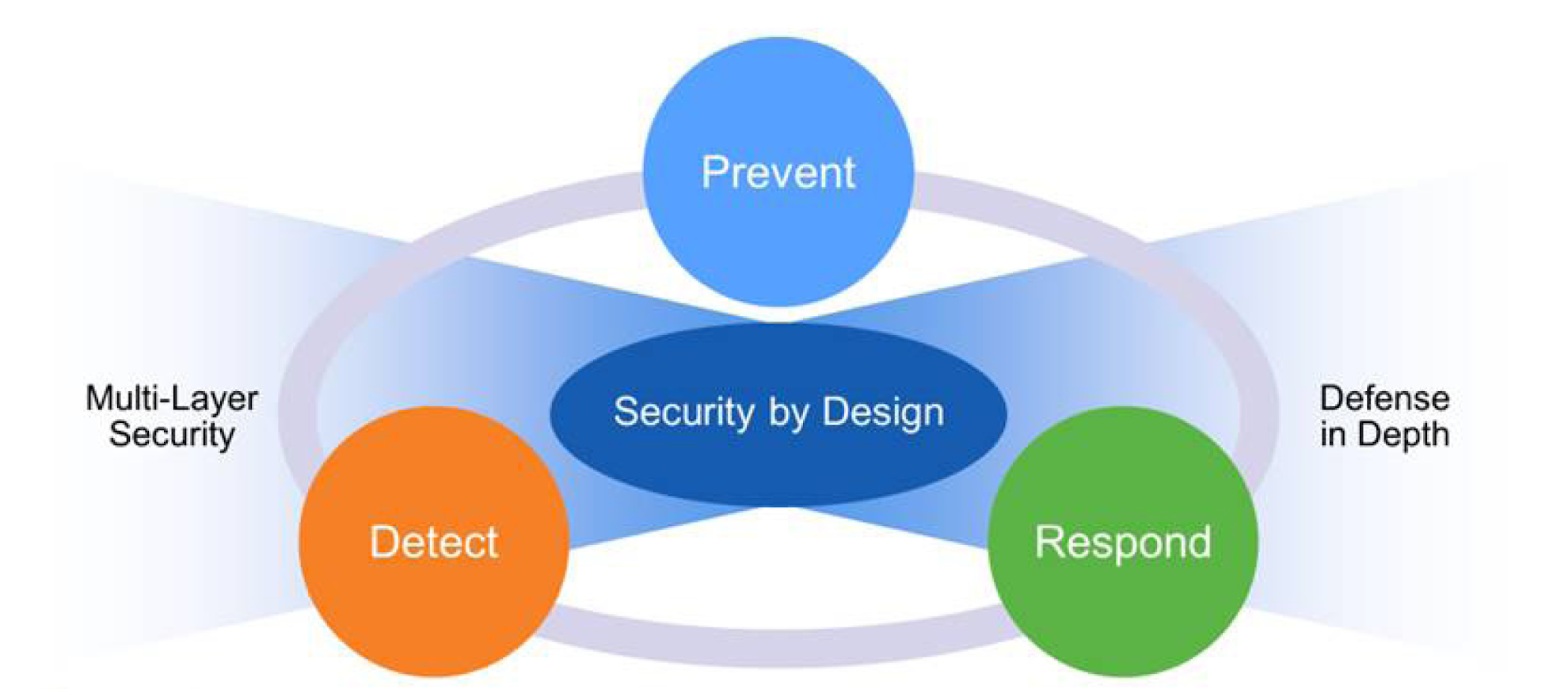

A basic security principle is to minimize networked or vulnerable systems’ attack surface — for instance, closing unused network ports and eliminating IoT device communication over the open internet. Generally speaking, building security into the architecture of IoT deployments and reducing attackers’ options to sabotage a system is more reliable than adding layers of defence to an unsecured architecture. Organizations deploying IoT projects should consider intrinsic security functionality such as embedded processors with cryptographic support.

But it is not practical to remove all risk from an IT system. For that reason, one of the most popular options is defence-in-depth, a military-rooted concept espousing the use of multiple layers of security. The basic idea is that if one countermeasure fails, additional security layers are available.

While the core principle of implementing multiple layers of security remains popular, defence in depth is also tied to the concept of perimeter-based defence, which is increasingly falling out of favour. “The defence-in-depth approach to cyber defence was formulated on the basis that everything outside of an organization’s perimeter should be considered ‘untrusted’ while everything internal should be inherently ‘trusted,’” said Andrew Rafla, a Deloitte Risk & Financial Advisory principal. “Organizations would layer a set of boundary security controls such that anyone trying to access the trusted side from the untrusted side had to traverse a set of detection and prevention controls to gain access to the internal network.”

Several trends have chipped away at the perimeter-based model. As a result, “modern enterprises no longer have defined perimeters,” Rafla said. “Gone are the days of inherently trusting any connection based on where the source originates.” Trends ranging from the proliferation of IoT devices and mobile applications to the popularity of cloud computing have fueled interest in cybersecurity models such as zero trust. “At its core, zero trust commits to ‘never trusting, always verifying’ as it relates to access control,” Rafla said. “Within the context of zero trust, security boundaries are created at a lower level in the stack, and risk-based access control decisions are made based on contextual information of the user, device, workload or network attempting to gain access.”
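As a rough illustration of the "never trust, always verify" idea described above, an access decision can be written as a function of who is asking, from which device and for which resource, with no input for network location. The `AccessRequest` fields and the `allow` function below are hypothetical names for this sketch and do not come from any particular zero-trust product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    user_verified: bool    # identity check passed, e.g. a multi-factor login
    device_healthy: bool   # device posture check passed, e.g. firmware is current
    resource: str          # what the caller wants to reach
    granted: frozenset     # resources this identity is explicitly allowed to use


def allow(request: AccessRequest) -> bool:
    """Deny by default. Network location is deliberately not an input."""
    return (
        request.user_verified
        and request.device_healthy
        and request.resource in request.granted
    )


camera_only = frozenset({"camera-feed"})
# Verified user, healthy device, resource inside the grant.
print(allow(AccessRequest(True, True, "camera-feed", camera_only)))
# Same user on a device that failed its posture check.
print(allow(AccessRequest(True, False, "camera-feed", camera_only)))
# Healthy device, but the resource is outside the grant.
print(allow(AccessRequest(True, True, "billing-db", camera_only)))
```

A production policy engine would weigh many more signals and would re-evaluate them continuously. The point of the sketch is only that every condition must hold on every request.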

Zero trust’s roots stretch back to the 1970s when a handful of computer scientists theorized on the most effective access control methods for networks. “Every program and every privileged user of the system should operate using the least amount of privilege necessary to complete the job,” one of those researchers, Jerome Saltzer, concluded in 1974.

While the concept of least privilege sought to limit trust among internal computing network users, zero trust extends the principle to devices, networks, workloads and external users. The recent surge in remote working has accelerated interest in the zero-trust model. “Many businesses have changed their paradigm for security as a result of COVID-19,” said Jason Haward-Grau, a leader in KPMG’s cybersecurity practice. “Many organizations are experiencing a surge to the cloud because businesses have concluded they cannot rely on a physically domiciled system in a set location.”

Based on data from Deloitte, 37.4% of businesses accelerated their zero-trust adoption plans in response to the pandemic. In contrast, more than one-third, or 35.2%, of those embracing zero trust stated that the pandemic had not changed the speed of their organization’s zero-trust adoption.

“I suspect that many of the respondents that said their organization’s zero-trust adoption efforts were unchanged by the pandemic were already embracing zero trust and were continuing with efforts as planned,” Rafla said. “In many cases, the need to support a completely remote workforce in a secure and scalable way has provided a tangible use case to start pursuing zero-trust adoption.”

A growing number of organizations are beginning to blend aspects of zero trust and traditional perimeter-based controls through a model known as secure access service edge (SASE), according to Rafla. “In this model, traditional perimeter-based controls of the defence-in-depth approach are converged and delivered through a cloud-based subscription service,” he said. “This provides a more consistent, resilient, scalable and seamless user experience regardless of where the target application a user is trying to access may be hosted. User access can be tightly controlled, and all traffic passes through multiple layers of cloud-based detection and prevention controls.”

Regardless of the framework, organizations should have policies in place for access control and identity management, especially for passwords. As Forrester’s Cunningham noted in “Cyber Warfare,” the password, “the single most prolific means of authentication for enterprises, users, and almost any system on the planet,” “is the lynchpin of failed security in cyberspace. Almost everything uses a password at some stage.” Numerous password repositories have been breached, and passwords are frequently recycled, making the password a common security weakness for user accounts as well as IoT devices.

A significant number of consumer-grade IoT devices have also had their default passwords posted online. Weak passwords used in IoT devices also fueled the growth of the Mirai botnet, which led to widespread internet outages in 2016. More recently, unsecured passwords on IoT devices in enterprise settings have reportedly attracted state-sponsored actors’ attention.

IoT devices and related systems also need an effective mechanism for device management, including tasks such as patching, connectivity management, device logging, device configuration, software and firmware updates and device provisioning. Device management capabilities also extend to access control modifications and include remediation of compromised devices. It is vital to ensure that device management processes themselves are secure and that a system is in place for verifying the integrity of software updates, which should be regular and not interfere with device functionality.
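One simple form of the update integrity check mentioned above is to compare the SHA-256 digest of a downloaded image against a digest published by the vendor. The sketch below uses only the Python standard library. The function name and the sample image are hypothetical, and it assumes the expected digest arrives over a trusted channel. Production systems typically go further and verify a digital signature over the image.

```python
import hashlib
import hmac


def firmware_matches_digest(image: bytes, expected_sha256_hex: str) -> bool:
    """Compare the image's SHA-256 digest with the published value in constant time."""
    actual = hashlib.sha256(image).hexdigest()
    return hmac.compare_digest(actual, expected_sha256_hex.lower())


image = b"example firmware image v1.2.3"        # stand-in for a real update file
published = hashlib.sha256(image).hexdigest()    # would come from a trusted channel

print(firmware_matches_digest(image, published))            # untouched image
print(firmware_matches_digest(image + b"\x00", published))  # image altered in transit
```

A device would run a check like this before writing the image to flash and refuse the update if it fails.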

Organizations must additionally address the life span of devices and the cadence of software updates. Many environments allow IT pros to identify a specific end-of-life period and remove or replace expired hardware. In such cases, there should be a plan for device disposal or transfer of ownership. In other contexts, such as in industrial environments, legacy workstations don’t have a defined expiration date and run out-of-date software. These systems should be segmented on the network. Often, such industrial systems cannot be patched as easily as IT systems can, requiring security professionals to perform a comprehensive security audit on the system before taking additional steps.

Identify Threats and Anomalies

In recent years, attacks have become so common that the cybersecurity community has shifted its approach from preventing breaches to assuming a breach has already happened. The threat landscape has evolved to the point that cyberattacks against most organizations are inevitable.

“You hear it everywhere: It’s a matter of when, not if, something happens,” said Dan Frank, a principal at Deloitte specializing in privacy and data protection. Matters have only become more precarious in 2020. The FBI has reported a three- to four-fold increase in cybersecurity complaints after the advent of COVID-19.

Advanced defenders have taken a more aggressive stance known as threat hunting, which focuses on proactively identifying breaches. Another popular strategy is to study adversary behaviour and tactics to classify attack types. Models such as the MITRE ATT&CK framework and the Common Vulnerability Scoring System (CVSS) are popular for assessing adversary tactics and vulnerabilities.
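To make the CVSS reference concrete, the sketch below maps a base score to the qualitative severity ratings defined in the CVSS v3.x specification (None, Low, Medium, High, Critical). The function name is an illustrative choice. Computing the score itself from a vector string is out of scope here.

```python
def cvss_severity(score: float) -> str:
    """Map a CVSS v3.x base score (0.0 to 10.0) to its qualitative severity rating."""
    if not 0.0 <= score <= 10.0:
        raise ValueError("CVSS base scores range from 0.0 to 10.0")
    if score == 0.0:
        return "None"
    if score < 4.0:
        return "Low"       # 0.1 to 3.9
    if score < 7.0:
        return "Medium"    # 4.0 to 6.9
    if score < 9.0:
        return "High"      # 7.0 to 8.9
    return "Critical"      # 9.0 to 10.0


for score in (0.0, 3.9, 5.4, 7.5, 9.8):
    print(score, cvss_severity(score))
```

Teams often use a mapping like this to decide patch priority, for example by treating anything rated High or Critical on an internet-facing device as urgent.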

While approaches to analyzing vulnerabilities and potential attacks vary according to an organization’s maturity, situational awareness is a prerequisite at any stage. The U.S. Army Field Manual defines the term like this: “Knowledge and understanding of the current situation which promotes timely, relevant and accurate assessment of friendly, enemy and other operations within the battlespace to facilitate decision making.”

In cybersecurity as in warfare, situational awareness requires a clear perception of the elements in an environment and their potential to cause future events. In some cases, a future cyberattack can be averted simply by patching software with known vulnerabilities.

Intrusion detection systems can automate some degree of monitoring of networks and operating systems. Intrusion detection systems that are based on detecting malware signatures also can identify common attacks. They are, however, not effective at recognizing so-called zero-day malware, which has not yet been catalogued by security researchers. Intrusion detection based on malware signatures is also ineffective at detecting custom attacks, such as those launched by a disgruntled employee who knows just enough Python or PowerShell to be dangerous. Sophisticated threat actors who slip through defences to gain network access can become insiders, with permission to view sensitive networks and files. In such cases, situational awareness is a prerequisite to mitigate damage.

Another strategy for intrusion detection systems is to focus on context and anomalies rather than malware signatures. Such systems could use machine learning to learn legitimate commands, use of messaging protocols and so forth. While this strategy overcomes the reliance on malware signatures, it can potentially trigger false alarms. Such a system can also detect so-called slow-rate attacks, a type of denial of service attack that gradually robs networking bandwidth but is more difficult to detect than volumetric attacks.
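The anomaly-based approach can be illustrated with a deliberately simple statistical baseline in place of machine learning: learn what normal traffic looks like, then flag observations that deviate too far from it. The traffic figures, the function name and the default threshold below are assumptions made for the sketch.

```python
from statistics import mean, stdev


def is_anomalous(baseline: list, observation: float, threshold: float = 3.0) -> bool:
    """Flag an observation more than `threshold` standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observation != mu
    return abs(observation - mu) / sigma > threshold


# Hypothetical messages-per-minute counts for one sensor during normal operation.
normal_traffic = [12, 11, 13, 12, 14, 12, 11, 13]

print(is_anomalous(normal_traffic, 13))  # within the usual range
print(is_anomalous(normal_traffic, 90))  # far outside the baseline
```

The threshold is where the false-alarm trade-off noted above shows up. Lowering it catches subtler deviations but also flags more ordinary variation.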

Respond Effectively to Cyber-Incidents

The foundation for successful cyber-incident response lies in having concrete security policies, architecture and processes. “Once you have a breach, it’s kind of too late,” said Deloitte’s Frank. “It’s what you do before that matters.”

That said, the goal of warding off all cyber-incidents, which range from violations of security policies and laws to data breaches, is not realistic. It is thus essential to implement short- and long-term plans for managing cybersecurity emergencies. Organizations should have contingency plans for addressing possible attacks and should practise responding to them through wargaming exercises. Doing so improves their ability to mitigate some cyberattacks and helps them develop effective, coordinated escalation measures for successful breaches.

There are several aspects of the zero trust model that enhance organizations’ ability to respond to and recover from cyber events. “Network and micro-segmentation, for example, is a concept by which trust zones are created by organizations around certain classes or types of assets, restricting the blast radius of potentially destructive cyberattacks and limiting the ability for an attacker to move laterally within the environment,” Rafla said. Also, efforts to automate and orchestrate zero trust principles can enhance the efficiency of security operations, speeding efforts to mitigate attacks. “Repetitive and manual tasks can now be automated and proactive actions to isolate and remediate security threats can be orchestrated through integrated controls,” Rafla added.

Response to cyber-incidents involves coordinating multiple stakeholders beyond the security team. “Every business function could be impacted — marketing, customer relations, legal compliance, information technology, etc.,” Frank said.

A six-step model for cyber-incident response from the SANS Institute contains the following steps:

- Preparation: Preparing the team to react to events ranging from cyberattacks to hardware failure and power outages.

- Identification: Determining if an operational anomaly should be classified as a cybersecurity incident, and how to respond to it.

- Containment: Isolating compromised devices on the network long enough to limit damage in the event of a confirmed cybersecurity incident. Long-term containment measures, by contrast, involve hardening affected systems so that they can return to normal operations.

- Eradication: Removing or restoring compromised systems. If a security team detects malware on an IoT device, for instance, this phase could involve reimaging its hardware to prevent reinfection.

- Recovery: Integrating previously compromised systems back into production and ensuring they operate normally after that. In addition to addressing the security event directly, recovery can involve crisis communications with external stakeholders such as customers or regulators.

- Lessons Learned: Documenting and reviewing the factors that led to the cyber-incident and taking steps to avoid future problems. This step should create a feedback loop that provides insights to support future preparation, identification and the other steps.
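The six steps above form a cycle rather than a straight line, which a short sketch can make explicit. The `Phase` enum and `next_phase` helper are illustrative names and are not part of the SANS material.

```python
from enum import Enum


class Phase(Enum):
    PREPARATION = 1
    IDENTIFICATION = 2
    CONTAINMENT = 3
    ERADICATION = 4
    RECOVERY = 5
    LESSONS_LEARNED = 6


def next_phase(current: Phase) -> Phase:
    """Advance one step; lessons learned wraps around to preparation."""
    phases = list(Phase)  # Enum iteration preserves definition order
    return phases[(phases.index(current) + 1) % len(phases)]


print(next_phase(Phase.CONTAINMENT).name)
print(next_phase(Phase.LESSONS_LEARNED).name)
```

Tracking an incident's current phase in a ticketing or runbook system this way makes it harder to skip the final review step once operations are back to normal.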

While the bulk of the SANS model focuses on cybersecurity operations, the last step should be a multidisciplinary process. Investing in cybersecurity liability insurance to offset risks identified through ongoing cyber-incident response requires support from upper management and the legal team. Ensuring compliance with the evolving regulatory landscape also demands feedback from the legal department.

A central practice that can prove helpful is documentation — not just for security incidents, but as part of ongoing cybersecurity assessment and strategy. Organizations with mature security documentation tend to be better positioned to deal with breaches.

“If you fully document your program — your policies, procedures, standards and training — that might put you in a more favourable position after a breach,” Frank explained. “If you have all that information summarized and ready, in the event of an investigation by a regulatory authority after an incident, it shows the organization has robust programs in place.”

Documenting security events and controls can help organizations become more proactive and more capable of embracing automation and machine learning tools. As they collect data, they should repeatedly ask how to make the most of it. KPMG’s Haward-Grau said cybersecurity teams should consider the following questions:

- What data should we focus on?

- What can we do to improve our operational decision making?

- How do we reduce our time and costs efficiently and effectively, given the nature of the reality in which we’re operating?

Ultimately, answering those questions may involve using machine learning or artificial intelligence technology, Haward-Grau said. “If your business is using machine learning or AI, you have to digitally enable them so that they can do what they want to do,” he said.

Finally, documenting security events and practices as they relate to IoT devices and beyond can be useful in evaluating the effectiveness of cybersecurity spending and provide valuable feedback for digital transformation programs. “Security is a foundational requirement that needs to be ingrained holistically in architecture and processes and governed by policies,” said Chander Damodaran, chief architect at Brillio, a digital consultancy firm. “Security should be a common denominator.”

IoT Security

Recent legislation requires businesses to assume responsibility for protecting Internet of Things (IoT) devices. “Security by Design” approaches are essential since successful applications deploy millions of units and analysts predict billions of devices deployed in the next five to ten years. The cost of fixing compromised devices later could overwhelm a business.

Security risks can never be eliminated: there is no single solution for all concerns, and the cost of countering every possible threat vector is prohibitive. The best we can do is minimize the risk, and design devices and processes to be easily updatable.

It is best to assess damage potential and implement security methods accordingly. For example, data protection needs for temperature and humidity sensors used in environmental monitoring are not as stringent as those for devices transmitting credit card information. The first may require anonymization for privacy, and the second may require encryption to prevent unauthorized access.

Overall Objectives

Senders and receivers must authenticate. IoT devices must transmit to the correct servers and ensure they receive messages from the correct servers.
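One lightweight way to let a server confirm that a message really came from a given device, and vice versa, is a keyed message authentication code. The sketch below uses HMAC-SHA256 from the Python standard library. The key value, the message format and the function names are placeholders. The sketch assumes each device shares a secret with the server, and real deployments often rely on mutual TLS with per-device certificates instead.

```python
import hashlib
import hmac


def sign(key: bytes, message: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the message with a shared secret key."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify(key: bytes, message: bytes, tag: bytes) -> bool:
    """Recompute the tag and compare it in constant time."""
    return hmac.compare_digest(sign(key, message), tag)


device_key = b"per-device-secret-provisioned-at-manufacture"  # placeholder value
reading = b'{"device":"sensor-17","temp_c":21.5}'
tag = sign(device_key, reading)

print(verify(device_key, reading, tag))                              # genuine message
print(verify(device_key, reading.replace(b"21.5", b"99.9"), tag))    # tampered payload
print(verify(b"wrong-key", reading, tag))                            # sender without the key
```

A tag like this proves origin and integrity but does not hide the payload or stop an attacker from replaying an old message, so it would normally be combined with encryption and a counter or timestamp.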

Mission-critical applications, such as vehicle crash notification or medical alerts, may fail if the connection is not reliable. Lack of communication itself is a lack of security.

Connectivity errors can make good data unreliable, and actions on the content may be erroneous. It is best to select connectivity providers with strong security practices—e.g., whitelisting access and traffic segregation to prevent unauthorized communication.

IoT Security: 360-Degree Approach

Finally, only authorized recipients should access the information. In particular, privacy laws require extra care in accessing the information on individuals.

Data Chain

Developers should implement security best practices at all points in the chain. Traditional IT security protects servers with access controls, intrusion detection and similar measures. However, the farther from the servers that best practices are implemented, the less impact remote IoT device breaches have on the overall application.

For example, compromised sensors might send bad data, and servers might take incorrect actions despite data filtering. Gateways thus offer an ideal location for security, with the compute capacity to perform encryption and to apply over-the-air (OTA) updates for security fixes.

Servers often automate responses based on data content. Simplistic automated responses to bad data could cascade into much greater difficulty. If devices transmit excessively, servers could overload and fail to provide timely responses to transmissions. Retry algorithms triggered by network unavailability often create such data storms.
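A common way to keep retries from turning into a data storm is exponential backoff with random jitter, so that thousands of devices that lost connectivity at the same moment do not all reconnect at the same moment. The source does not prescribe this technique. The sketch below is one possible mitigation, and the function name and default values are assumptions.

```python
import random
from typing import Optional


def backoff_delay(attempt: int, base: float = 1.0, cap: float = 300.0,
                  rng: Optional[random.Random] = None) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    The upper bound doubles with each attempt until it reaches `cap`, and the
    actual wait is drawn uniformly below that bound so that devices do not
    retry in lockstep.
    """
    if rng is None:
        rng = random.Random()
    # Clamp the exponent so very large attempt counts cannot overflow a float.
    ceiling = min(cap, base * (2 ** min(attempt, 32)))
    return rng.uniform(0.0, ceiling)


rng = random.Random(42)  # seeded only to make the demonstration repeatable
for attempt in range(5):
    print(attempt, round(backoff_delay(attempt, rng=rng), 2))
```

Capping the delay keeps a device from waiting indefinitely, while the randomness spreads the load on the server once the network returns.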

IoT devices often run on mains power rather than batteries, so compromised units could continue to operate for years. Implementing over-the-air (OTA) functions for remotely disabling devices could be critical.

When a breach requires device firmware updates, OTA support is vital when devices are inaccessible or large numbers of units must be modified rapidly. All devices should support OTA, even if it increases costs—for example, adding memory for managing multiple “images” of firmware for updates.
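The extra memory for multiple firmware images mentioned above is usually what makes a safe update possible: the new image is written to a spare slot while the current one keeps running, and the device switches over only if the new image proves itself. The sketch below models that two-slot arrangement in plain Python. The class, its method names and the version strings are hypothetical, and a real bootloader would implement the equivalent logic in firmware.

```python
from dataclasses import dataclass


@dataclass
class FirmwareSlots:
    active: str      # slot currently booted, "A" or "B"
    versions: dict   # slot name -> firmware version string

    def inactive(self) -> str:
        return "B" if self.active == "A" else "A"

    def stage_update(self, version: str) -> None:
        """Write the new image to the inactive slot; the running image is untouched."""
        self.versions[self.inactive()] = version

    def commit(self, self_test_passed: bool) -> str:
        """Switch to the staged image only if it passed its self-test; otherwise stay put."""
        if self_test_passed:
            self.active = self.inactive()
        return self.versions[self.active]


slots = FirmwareSlots(active="A", versions={"A": "1.0.0", "B": "0.9.0"})

slots.stage_update("1.1.0")
print(slots.commit(self_test_passed=True))    # device now runs the staged image

slots.stage_update("1.2.0")
print(slots.commit(self_test_passed=False))   # bad image: device keeps its current firmware
```

Because the previous image is never overwritten until the new one is confirmed, a failed or interrupted update leaves the device on working firmware rather than bricked in the field.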

In summary, the IoT security best practices of authentication, encryption, remote device disable and OTA for security fixes, along with traditional IT server protection, offer the best chance of minimizing the risk of attacks on IoT applications.
