
For object detection projects, labeling your images with their corresponding bounding boxes and names is a tedious and time-consuming task, often requiring a human to label each image by hand. The Edge Impulse Studio has already dramatically decreased the amount of time it takes to get from raw images to a fully labeled dataset with the Data Acquisition Labeling Queue feature directly in your web browser. To make this process even faster, the Edge Impulse Studio is getting a new feature: AI-Assisted Labeling.

Automatically label common objects with YOLOv5.

To get started, create a “Classify multiple objects” images project via the Edge Impulse Studio new project wizard or open your existing object detection project. Upload your object detection images to your Edge Impulse project’s training and testing sets. Then, from the Data Acquisition tab, select “Labeling queue.”

1. Using YOLOv5

By utilizing an existing library of pre-trained object detection models from YOLOv5 (trained with the COCO dataset), common objects in your images can quickly be identified and labeled in seconds without needing to write any code!

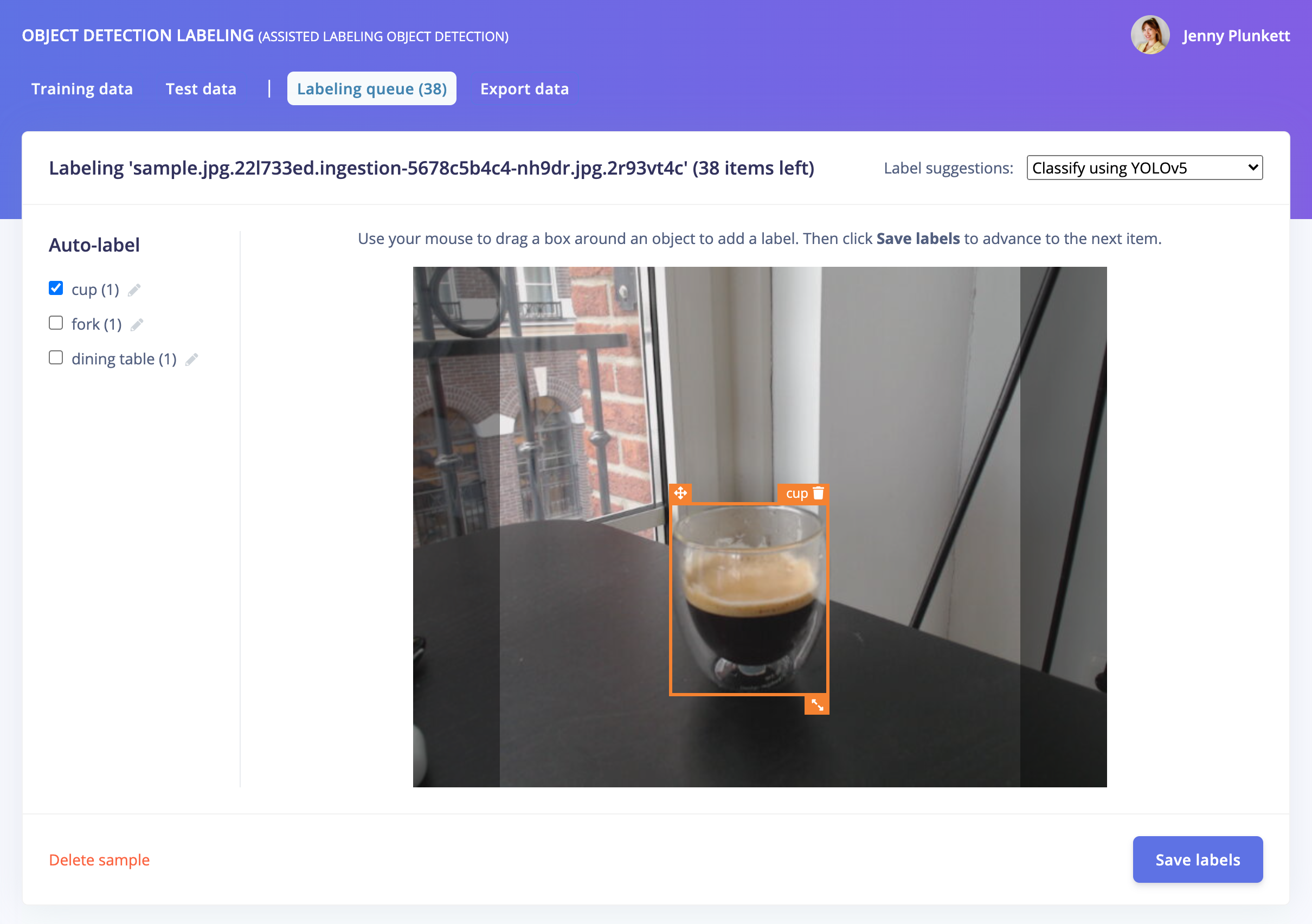

To label your objects with YOLOv5 classification, click the Label suggestions dropdown and select “Classify using YOLOv5.” If your object is more specific than what is auto-labeled by YOLOv5, e.g. “coffee” instead of the generic “cup” class, you can modify the auto-labels to the left of your image. These modifications will automatically apply to future images in your labeling queue.

Click Save labels to move on to your next raw image, and see your fully labeled dataset ready for training in minutes!

2. Using your own model

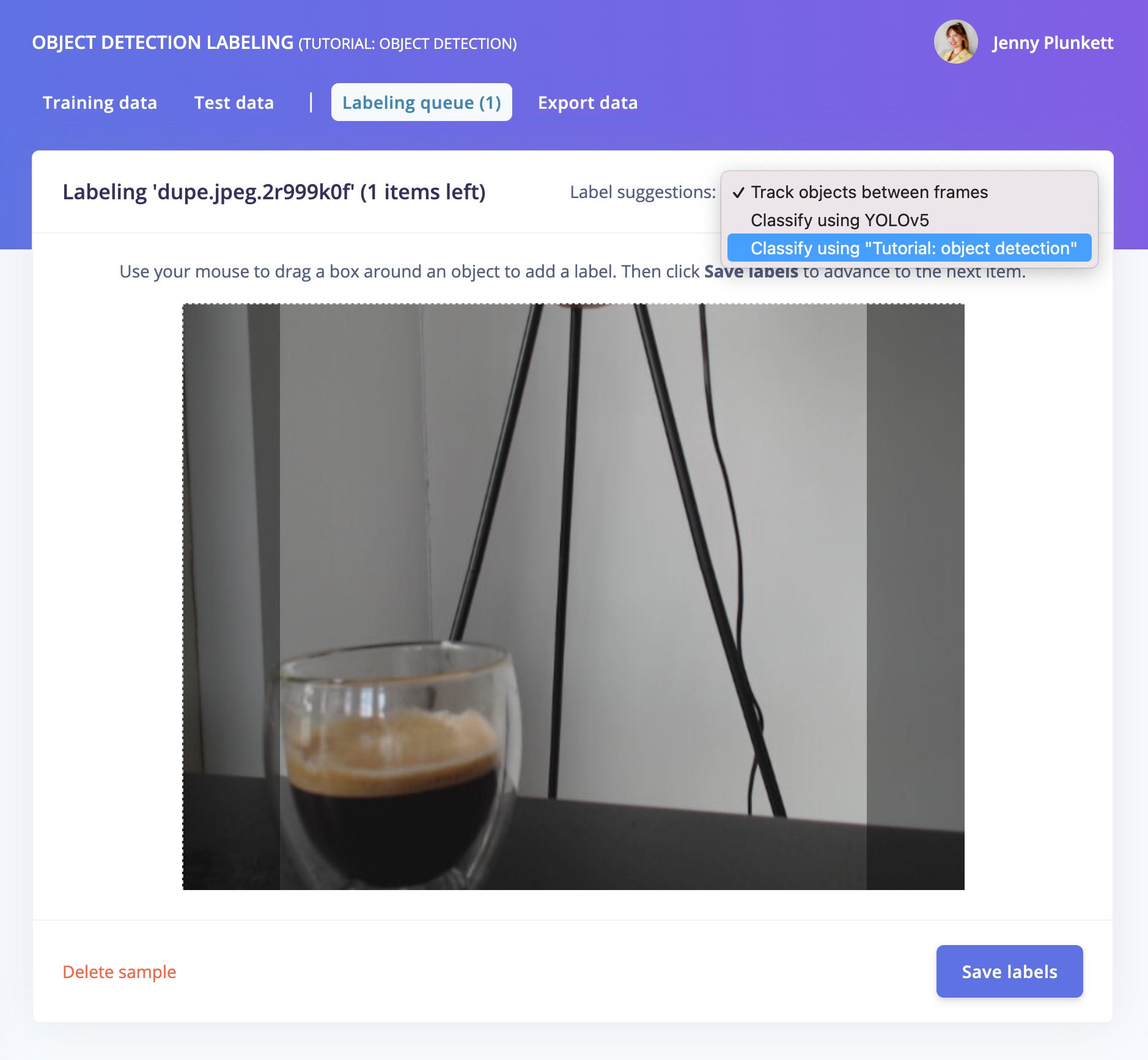

You can also use your own trained model to predict and label your new images. From an existing (trained) Edge Impulse object detection project, upload new unlabeled images from the Data Acquisition tab. Then, from the “Labeling queue”, click the Label suggestions dropdown and select “Classify using <your project name>”:

You can also upload a few samples to a new object detection project, train a model, then upload more samples to the Data Acquisition tab and use the AI-Assisted Labeling feature for the rest of your dataset. Classifying using your own trained model is especially useful for objects that YOLOv5 does not cover, such as industrial objects.

Click Save labels to move on to your next raw image, and see your fully labeled dataset ready for training in minutes using your own pre-trained model!

3. Using object tracking

If you have objects that are a similar size or common between images, you can also track your objects between frames within the Edge Impulse Labeling Queue, reducing the amount of time needed to re-label and re-draw bounding boxes over your entire dataset.

Draw your bounding boxes and label your images, then, after clicking Save labels, the objects will be tracked from frame to frame:

Track and auto-label your objects between frames.

Now that your object detection project contains a fully labeled dataset, learn how to train and deploy your model to your edge device: check out our tutorial!

Originally posted on the Edge Impulse blog by Jenny Plunkett - Senior Developer Relations Engineer.

The head is surely the most complex group of organs in the human body, but also the most delicate. The assessment and prevention of risks in the workplace remains the first priority approach to avoid accidents or reduce the number of serious injuries to the head. This is why wearing a hard hat in an industrial working environment is often required by law and helps to avoid serious accidents.

This article gives an overview of how to check that all workers are wearing their helmets, using a machine learning object detection model.

For this project, we have been using:

- Edge Impulse Studio to acquire some custom data, visualize the data, train the machine learning model and validate the inference results.

- Part of this public dataset from Roboflow, from which the images containing the smallest bounding boxes have been removed.

- Part of the Flickr-Faces-HQ (FFHQ) dataset (under Creative Commons BY 2.0 license) to rebalance the classes in our dataset.

- Google Colab to convert the YOLOv5 PyTorch format from the public dataset to the Edge Impulse ingestion format.

- A Raspberry Pi, an NVIDIA Jetson Nano or any Intel-based MacBook to deploy the inference model.
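To make the conversion step more concrete, here is a minimal sketch of turning YOLOv5-style label lines (class index plus normalized center, width and height) into pixel bounding boxes. The output layout follows the Edge Impulse `bounding_boxes.labels` file as we understand it, so treat the JSON keys as an assumption and check them against the ingestion documentation. The class order, file name and image size are hypothetical, and this is not the notebook used for the project.

```python
import json


def yolo_to_pixel_box(line, img_w, img_h, class_names):
    """Convert one YOLO label line (normalized center x/y, width, height) to a pixel box."""
    class_id, xc, yc, w, h = line.split()
    box_w = float(w) * img_w
    box_h = float(h) * img_h
    return {
        "label": class_names[int(class_id)],
        # YOLO stores the box center; the target format wants the top-left corner.
        "x": round(float(xc) * img_w - box_w / 2),
        "y": round(float(yc) * img_h - box_h / 2),
        "width": round(box_w),
        "height": round(box_h),
    }


def build_labels_file(annotations, class_names):
    """annotations maps file name -> (image width, image height, list of YOLO lines)."""
    boxes = {
        name: [yolo_to_pixel_box(line, w, h, class_names) for line in lines]
        for name, (w, h, lines) in annotations.items()
    }
    # Assumed layout of a bounding_boxes.labels file.
    return {"version": 1, "type": "bounding-box-labels", "boundingBoxes": boxes}


class_names = ["head", "helmet"]  # hypothetical class order
annotations = {"site_001.jpg": (640, 480, ["1 0.5 0.5 0.25 0.5"])}
labels = build_labels_file(annotations, class_names)
print(json.dumps(labels, indent=2))
```

In practice the image size would be read from each file rather than hard-coded.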

Before we get started, here are some insights into the benefits and drawbacks of using a public dataset versus collecting your own.

Using a public dataset is a good way to start developing your application quickly, validate your idea and check the first results. But the results are often disappointing when you test on your own data and in real conditions. As such, for very specific applications, you might spend much more time trying to tweak an open dataset than you would collecting your own. Also, remember to always make sure that the license suits your needs when using a dataset you found online.

On the other hand, collecting your own dataset can take a lot of time; it is a repetitive and often tedious task. But it lets you collect data that is as close as possible to your real-life application, with the same lighting conditions, the same camera or the same angle, for example. Therefore, your accuracy in real conditions will be much higher.

Using only custom data can indeed work well in your environment but it might not give the same accuracy in another environment, thus generalization is harder.

The dataset which has been used for this project is a mix of open data, supplemented by custom data.

First iteration, using only the public datasets

At first, we tried to train our model using only a small portion of this public dataset: 176 items in the training set and 57 items in the test set. We took only images containing a bounding box bigger than 130 pixels; we will see why later.
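The size filter can be sketched as follows. The text does not say whether the 130-pixel limit applies to width, height or both, so this example assumes an image is kept when at least one of its boxes exceeds 130 pixels on both sides. The helper names and sample annotations are hypothetical.

```python
MIN_BOX_SIDE_PX = 130  # threshold quoted in the text


def has_large_box(boxes, min_side=MIN_BOX_SIDE_PX):
    """True if at least one box is larger than min_side pixels in both width and height."""
    return any(b["width"] > min_side and b["height"] > min_side for b in boxes)


def filter_images(boxes_by_image, min_side=MIN_BOX_SIDE_PX):
    """Keep only the images that contain at least one sufficiently large box."""
    return {
        name: boxes
        for name, boxes in boxes_by_image.items()
        if has_large_box(boxes, min_side)
    }


dataset = {
    "close_up.jpg": [
        {"label": "helmet", "x": 100, "y": 60, "width": 180, "height": 200},
    ],
    "crowd.jpg": [
        {"label": "helmet", "x": 10, "y": 10, "width": 40, "height": 35},
        {"label": "head", "x": 90, "y": 12, "width": 38, "height": 36},
    ],
}
kept = filter_images(dataset)
print(sorted(kept))
```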

If you go through the public dataset, you can see that it contains very few “head” data samples. The dataset is therefore considered imbalanced.
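A quick way to spot this kind of imbalance is to count the boxes per label. The sketch below uses made-up toy annotations, not figures from the real dataset, and the function name is hypothetical.

```python
from collections import Counter


def count_labels(boxes_by_image):
    """Count how many bounding boxes carry each label across the whole dataset."""
    return Counter(
        box["label"] for boxes in boxes_by_image.values() for box in boxes
    )


# Hypothetical toy annotations, for illustration only.
sample = {
    "a.jpg": [{"label": "helmet"}, {"label": "helmet"}, {"label": "head"}],
    "b.jpg": [{"label": "helmet"}, {"label": "helmet"}],
    "c.jpg": [{"label": "helmet"}],
}
counts = count_labels(sample)
ratio = max(counts.values()) / min(counts.values())
print(dict(counts), f"imbalance ratio {ratio:.1f}:1")
```

A ratio far from 1:1 suggests adding samples of the rarer class, which is what the next step does.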

Several techniques exist to rebalance a dataset. Here, we will add new images from Flickr-Faces-HQ (FFHQ). These images do not have bounding boxes, but drawing them can be done easily in the Edge Impulse Studio. You can directly import them using the uploader portal. Once your data has been uploaded, just draw boxes around the heads and give each one a label.

Now that the dataset is more balanced, with both images and bounding boxes of hard hats and heads, we can create an impulse, which is a mix of digital signal processing (DSP) blocks and training blocks:

In this particular object detection use case, the DSP block will resize an image to fit the 320x320 pixels needed for the training block and extract meaningful features for the Neural Network. Although the extracted features don’t show a clear separation between the classes, we can start distinguishing some clusters:

To train the model, we selected the Object Detection training block, which fine-tunes a pre-trained object detection model on your data. It gives good performance even with relatively small image datasets. This object detection learning block relies on MobileNetV2 SSD FPN-Lite 320x320.

According to Daniel Situnayake, co-author of the TinyML book and founding TinyML engineer at Edge Impulse, this model “works much better for larger objects – if the object takes up more space in the frame it’s more likely to be correctly classified.” This is one of the reasons why we got rid of the images containing the smallest bounding boxes in our import script.

After training the model, we obtained 61.6% accuracy on the training set and 57% accuracy on the testing set. You might also note a huge accuracy difference between the quantized version and the float32 version. However, in the Linux deployment, the default model is the unoptimized (float32) version. We will therefore focus on the float32 version only in this article.

This accuracy is not satisfying, and it tends to have trouble detecting the right objects in real conditions:

Second iteration, adding custom data

On the second iteration of this project, we went through the process of collecting some of our own data. A handy way to collect custom data is to use a mobile phone. You can also perform this step with the same camera you will be using in your factory or on your construction site; this will be even closer to real conditions and should therefore work best for your use case. In our case, we used a white hard hat when collecting data. If your company uses yellow ones, for example, consider collecting your data with the same hard hats.

Once the data has been acquired, go through the labeling process again and retrain your model.

We obtained a model that is only slightly more accurate in terms of training performance. However, in real conditions, the model works far better than the previous one.

Finally, to deploy your model on your Raspberry Pi, NVIDIA Jetson Nano or Intel-based MacBook, just follow the instructions provided in the links. The command line interface `edge-impulse-linux-runner` will create a lightweight web interface where you can see the results.

Note that the inference is run locally and you do not need any internet connection to detect your objects. Last but not least, the trained models and the inference SDK are open source. You can use them, modify them and integrate them into a broader application that matches your specific needs, such as stopping a machine when a head is detected for more than 10 seconds.
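As an illustration of the "head detected for more than 10 seconds" idea, here is a minimal sketch of the timing logic only. The `(label, score)` detection format, the class name `SustainedDetection` and the 0.5 score cut-off are assumptions for this example; they are not the output format of the runner or the SDK.

```python
class SustainedDetection:
    """Signals once a label has been seen in every frame for more than hold_seconds."""

    def __init__(self, label="head", hold_seconds=10.0, min_score=0.5):
        self.label = label
        self.hold_seconds = hold_seconds
        self.min_score = min_score  # assumed confidence cut-off
        self._first_seen = None  # timestamp at which the current streak started

    def update(self, timestamp, detections):
        """detections: list of (label, score) for one frame. Returns True to stop the machine."""
        seen = any(
            label == self.label and score >= self.min_score
            for label, score in detections
        )
        if not seen:
            # The streak is broken, so the timer starts over.
            self._first_seen = None
            return False
        if self._first_seen is None:
            self._first_seen = timestamp
        return timestamp - self._first_seen > self.hold_seconds


monitor = SustainedDetection()
frames = [
    (0.0, [("head", 0.9)]),
    (5.0, [("head", 0.8)]),
    (6.0, [("helmet", 0.9)]),  # helmet back on: streak resets
    (7.0, [("head", 0.9)]),
    (12.0, [("head", 0.7)]),
    (17.5, [("head", 0.9)]),
]
alerts = [monitor.update(t, dets) for t, dets in frames]
print(alerts)
```

Resetting on a single missed frame is a strict choice; a real deployment might tolerate short gaps to cope with flickering detections.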

This project has been publicly released. Feel free to have a look at it in the Edge Impulse Studio, clone the project and go through every step to get a better understanding: https://studio.edgeimpulse.com/public/34898/latest

The essence of this use case is that Edge Impulse makes it possible to develop industry-grade solutions in the health and safety context with very little effort. These can be embedded in bigger industrial control and automation systems with a consistent and stringent focus on machine operations linked to H&S-compliant measures. Pre-trained models, which can later be easily retrained in the final industrial context as a “calibration” step, make this a customizable solution for your next project.

Originally posted on the Edge Impulse blog by Louis Moreau - User Success Engineer at Edge Impulse & Mihajlo Raljic - Sales EMEA at Edge Impulse

Only for specific jobs

Just a few decades ago, headsets were meant for use only with specific job functions – primarily B2B. They were used simply as extensions of communication devices, reserved for astronauts, mission control engineers, air traffic controllers, call center agents, firefighters, etc., who all had mission-critical communication to convey while their hands had to deal with something more important than holding a communication device. In the B2C consumer space, you rarely saw anyone wearing headsets in public. The only devices you saw attached to one’s ears were hearing aids.

Tale of two cities: Telephony and music

Most headsets were used for communication purposes, which is also referred to as ‘Telephony’ mode. As with most communications, this requires bi-directional audio. Except for serious audiophiles and audio professionals, headsets were not used for music consumption. Any type of half-duplex audio consumption was referred to as ‘Music’ mode.

Deskphones and speakerphones

Within the enterprise, a deskphone was the primary communication device for a long time. Speakerphones were becoming a common staple in meeting rooms, facilitating active collaboration amongst geographically distributed team members. So, there were ‘handsets’ but no ‘headsets’ quite yet.

Mobile revolution: Communication and consumption

As the Internet and the browser were taking shape in the early ’90s, deskphones were getting untethered in the form of big and bulky cellular phones. At around the same time, a Body Area Network (BAN) wireless technology called Bluetooth was invented. Its original purpose was simply to replace the cords used for connecting a keyboard and mouse to the personal computer.

As cellular phones were slimming down and becoming more mainstream, scientists figured out how to use Bluetooth radio for short-range full-duplex audio communications as well. Fueled by rapid cell-phone proliferation, along with the need for convenient hands-free communication by enterprise executives and professionals (for whom hands-free communication while being mobile was important), monaural Bluetooth headsets started becoming a loyal companion to cell phones.

While headsets were used with various telephony devices for communications, portable analog music (Sony Walkman, anybody?) started giving way to portable digital music. Cue the iPod era. The portable music players primarily used simple wired speakers on a rope. These early ‘earbuds’ didn’t even have a microphone in them because they were meant solely for audio consumption – not for audio capture.

The app economy, softphones and SaaS

The mobile revolution transformed simple communication devices into information exchange devices and then, more recently, into mini supercomputers whose applications take care of functions once served by numerous individual devices, like a telephony device, camera, calculator, music player, etc. As narrowband networks gave way to broadband networks in both the wired and wireless worlds, ‘communication’ and ‘media consumption’ began to transform in a significant way as well.

Communication: Deskphones or ‘hard’-phones started being replaced by VoIP-based soft-phones. A new market segment called Unified Communications (UC) was born because of this hard- to soft-phone transition. UC has been a key growth driver for the enterprise headset market for the last several years, and it continues to show healthy growth. Enterprises could not part ways with circuit-switched telephony devices completely, but they started adopting packet-switched telephony services called soft-phones. So, UC communication device companies are effectively helping enterprises by being the bridge from ‘old’ to ‘new’ technology. UC has recently evolved into UC&C – where the second ‘C’ represents ‘Collaboration.’ Collaboration using audio and video (like Zoom or Teams calls) got a real shot in the arm because of the COVID-19-induced remote work scenario that has been playing out globally for the last year and a half.

Media consumption: ‘Static’ storage media (audio cassettes, VHS tapes, CDs, DVDs) and their corresponding media players, including portable digital music devices like iPods, were replaced by ‘streaming’ services in a swift fashion.

Why did this transformation matter to the headset world?

Communication & collaboration by the enterprise users as well as media consumption by consumers collided head-on. Because of this, monaural headsets have almost become irrelevant. Nearly all headsets today are binaural or stereo, and have microphone(s) in them.

This is because the same device needs to serve the purposes of both: consuming half-duplex audio when listening to music, podcasts, or watching movies or webinars, and enabling full-duplex audio for a telephone conversation, a conference call, or video conference.

Fewer form factors… more smarts

From: Very few companies building manifold headset form factors that catered to the needs of every diverse persona out there.

To: Quite a few companies (obviously, a handful of them a great deal more successful than the others) driving the headset space to effectively just two form factors:

- Tiny True Wireless Stereo (TWS) earbuds and

- Big binaural occluding cans!

Less hardware… more software

This trend has been in place for quite some time, impacting several industries. Headsets are no exception. Ever more sophisticated semiconductor components and the proliferation of miniaturized microelectromechanical systems (MEMS) components have taken the place of numerous bulkier hardware components.

What do modern headsets primarily do with regards to audio?

- Render received audio in the wearer’s ear

- Capture spoken audio from the wearer’s mouth

- Calculate anti-noise and render it in the wearer’s ear (in noise-cancelling headsets)

Sounds straightforward, right? It is not as simple as it sounds – at least for enterprise-grade professional headsets. Audio is processed in the digital domain in all modern headsets using sophisticated digital signal processing techniques. DSP algorithms running on the DSP cores of the processors are the most compute-intensive aspects of these devices. Capture/transmit/record audio DSP is relatively more complicated than the render/receive/playback audio DSP. Depending on the acoustic design (headset boom, number of microphones, speaker/microphone placement), audio performance requirements, and other audio feature requirements, the DSP workload varies.

Intelligence right at the edge!

Headsets are true edge devices. Most headset designs have severe constraints around several factors: cost, size, weight, power, MIPS, memory, etc.

Headsets are right at the horse’s mouth (pun intended) of massive trends and modern use cases like:

- Wake word detection for virtual personal assistants (VPAs)

- Keyword detection for device control and various other data/analytics purposes

- Modern user interface (UI) techniques like voice-as-UI, touch-as-UI, and gestures-as-UI

- Transmit noise cancellation/suppression (TxNC or TxNS)

- Adaptive ambient noise cancellation (ANC) mode selection

- Real-time transcription assistance

- Ambient noise identification

- Speech synthesis, speaker identification, speaker authentication, etc.

Most importantly, note that there is immense end customer value for all these capabilities.

Until recently, even if one wanted to, very little could be done to support most of these advanced capabilities right in the headset. Only the features and functionalities that fit within the computational limits of the on-board DSP cores, using traditional DSP techniques, could be supported.

Enter edge compute, AutoML, tinyML, and MLOps revolutions…

Several DSP-only workloads of the past are rapidly transitioning to an efficient hybrid model of DSP+ML workloads. Quite a few ML only capabilities that were not even possible using traditional DSP techniques are becoming possible right now as well. All of this is happening within the same constraints that existed before.

Silicon as well as software innovations are behind such possibilities. Silicon innovations are relatively slow to be adopted into device architectures at the moment, but they will be over time. Software innovations extract more value out of existing silicon architectures while helping converge on more efficient hardware architecture designs for next-generation products.

Thanks to embedded machine learning, tasks and features that were close to impossible are now becoming a reality. Production-grade inference models with tiny program and data memory footprints, in addition to impressive performance, are possible today because of major advancements in AutoML and tinyML techniques. Building these models does not require massive amounts of data either. The ML framework and the automated yet flexible process offered by platforms like those from Edge Impulse make the ML model creation process simple and efficient compared to traditional methods of building such models.

Microphones and sensors galore

All headsets feature at least one microphone, and many feature multiple, sometimes up to 16 of them! The field of ML for audio is vast, and it is continuing to expand further. Much of the ML inferencing that was possible only in cloud backends or on sophisticated compute-rich endpoints is now fully possible on most resource-constrained embedded IoT silicon.

Microphones themselves are sensors, but many other sensors like accelerometers, capacitive touch, passive infrared (PIR), ultrasonic, radar, and ultra-wideband (UWB) are making their way into headsets to meet and exceed customer expectations. Spatial audio, aka 3D audio, is one such application that utilizes several sensors to give the end-user an immersive audio experience. Sensor fusion is the concept of utilizing data from multiple sensors concurrently to arrive at intelligent decisions. Sensor fusion implementations that use modern ML techniques have been shown to have impressive performance metrics compared to traditional non-ML methods.

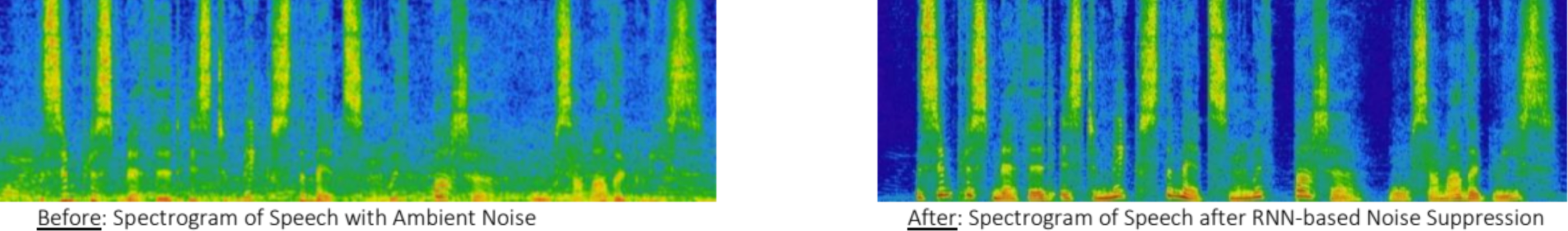

Transmit noise suppression (TxNS) has always been the holy grail of all premium enterprise headsets. It is an important aspect of enterprise collaboration. It takes a magical combination of physical acoustic design – which is more art than science – and optimally tuned complex audio DSP algorithms implemented under severe MIPS, memory, latency, and other constraints. In recent years, some groundbreaking work has been done in utilizing recurrent neural network (RNN) techniques to improve TxNS performance to levels that were never seen before. Because of their complexity and high compute footprint, these techniques have been incorporated into devices that have mobile phone platform-like compute capabilities. The challenge of bringing such solutions to resource-constrained embedded systems, such as enterprise headsets, while staying within the constraints laid out earlier, remains unsolved to a major extent. Advancements in embedded silicon technology, combined with the tinyML/AutoML software innovations listed above, are helping address this and several other ML challenges.

Conclusion

Modern use cases that enable the hearables to become ‘smart’ are compelling. Cloud-based frameworks and tools necessary to build, iterate, optimize, and maintain high performance small footprint ML models to address these applications are readily available from entities like Edge Impulse. Any hearable entity that doesn’t take full advantage of this staggering advancement in technology will be at a competitive disadvantage.

Originally posted on the Edge Impulse blog by Arun Rajasekaran.

Edge Impulse has joined 1% for the Planet, pledging to donate 1% of our revenue to support nonprofit organizations focused on the environment. To complement this effort we launched the ElephantEdge competition, aiming to create the world’s best elephant tracking device to protect elephant populations that would otherwise be impacted by poaching. In a similar vein, this blog will detail how Lacuna Space, Edge Impulse, a microcontroller and LoRaWAN can promote the conservation of endangered species by monitoring bird calls in remote areas.

Over the past years, The Things Network has worked toward the democratization of the Internet of Things, building a global, crowdsourced LoRaWAN network carried by the thousands of users operating their own gateways worldwide. Thanks to Lacuna Space’s satellite constellation, the network coverage goes one step further. Lacuna Space uses LEO (Low-Earth Orbit) satellites to provide LoRaWAN coverage at any point around the globe. Messages received by satellites are then routed to ground stations and forwarded to LoRaWAN service providers such as TTN. This technology can benefit several industries and applications: tracking a vessel not only in harbors but across the oceans, or monitoring endangered species in remote areas. All that with only 25 mW of power (ISM band limit) to send a message to the satellite. This is truly amazing!

Most of these devices are typically simple, just sending a single temperature value, or other sensor reading, to the satellite - but with machine learning we can track much more: what devices hear, see, or feel. In this blog post we'll take you through the process of deploying a bird sound classification project using an Arduino Nano 33 BLE Sense board and a Lacuna Space LS200 development kit. The inferencing results are then sent to a TTN application.

Note: Access to the Lacuna Space program and dev kit is limited to a closed group at the moment. Get in touch with Lacuna Space for hardware and software access. The technical details to configure your Arduino sketch and TTN application are available in our GitHub repository.

Our bird sound model classifies house sparrow and rose-ringed parakeet species with a 92% accuracy. You can clone our public project or make your own classification model following our different tutorials such as Recognize sounds from audio or Continuous Motion Recognition.

Once you have trained your model, head to the Deployment section, select the Arduino library and Build it.

Import the library within the Arduino IDE, and open the microphone continuous example sketch. We made a few modifications to this example sketch to interact with the LS200 dev kit: we added a new UART link and we transmit classification results only if the prediction score is above 0.8.
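The gating rule is simple enough to sketch. The actual sketch runs on the Arduino in C++; the Python below only illustrates the decision, and the function name `should_transmit` is hypothetical. It assumes "prediction score" means the score of the most likely class.

```python
TRANSMIT_THRESHOLD = 0.8  # results are only sent above this score, per the text


def should_transmit(scores, threshold=TRANSMIT_THRESHOLD):
    """True when the most likely class scores above the threshold."""
    return max(scores.values()) > threshold


confident = {"housesparrow": 0.91406, "redringedparakeet": 0.05078, "noise": 0.03125}
uncertain = {"housesparrow": 0.45, "redringedparakeet": 0.40, "noise": 0.15}
print(should_transmit(confident), should_transmit(uncertain))
```

Skipping low-confidence windows keeps the number of uplinks down, which matters when each message has to wait for a satellite pass.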

Connect with the Lacuna Space dashboard by following the instructions in our application’s GitHub README. Using a web tracker, you can determine when a Lacuna Space satellite will next pass over your location. You can then receive the signal through your The Things Network application and view the inferencing results of the bird call classification:

{
  "housesparrow": "0.91406",
  "redringedparakeet": "0.05078",
  "noise": "0.03125",
  "satellite": true
}

No Lacuna Space development kit yet? No problem! You can already start building and verifying your ML models on the Arduino Nano 33 BLE Sense or one of our other development kits, test them out with your local LoRaWAN network (by pairing the board with a LoRa radio or LoRa module) and switch over to the Lacuna satellites when you get your kit.
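On the application side, the decoded payload shown above carries the scores as strings, so they need converting before they can be compared. A minimal sketch, assuming the payload arrives as JSON text with exactly those fields (the helper name `top_prediction` is hypothetical):

```python
import json

# Decoded uplink fields, copied from the example payload in this post.
payload = (
    '{"housesparrow": "0.91406", "redringedparakeet": "0.05078", '
    '"noise": "0.03125", "satellite": true}'
)


def top_prediction(raw):
    """Parse the decoded uplink JSON and return (label, score, via_satellite)."""
    fields = json.loads(raw)
    # "satellite" is a flag, not a class score, so take it out first.
    via_satellite = bool(fields.pop("satellite", False))
    scores = {label: float(value) for label, value in fields.items()}
    label = max(scores, key=scores.get)
    return label, scores[label], via_satellite


print(top_prediction(payload))
```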

Originally posted on the Edge Impulse blog by Aurelien Lequertier - Lead User Success Engineer at Edge Impulse, Jenny Plunkett - User Success Engineer at Edge Impulse, & Raul James - Embedded Software Engineer at Edge Impulse

Arm DevSummit 2020 debuted this week (October 6 – 8) as an online virtual conference focused on engineers and providing them with insights into the Arm ecosystem. The summit lasted three days, over which Arm painted an interesting technology story about the current and future state of computing and where developers fit within that story. I’ve been attending Arm TechCon (which has become Arm DevSummit) for more than half a decade now and, as I perused the content, I noticed several take-a-ways for developers working on microcontroller-based embedded systems. In this post, we will examine these key take-a-ways and I’ll point you to some of the sessions that I think may pique your interest.

(For those of you who aren’t yet aware, you can register for free up until October 21st and still watch the conference materials up until November 28th.)

Take-A-Way #1 – Expect Big Things from NVIDIA’s Acquisition of Arm

As many readers probably already know, NVIDIA is in the process of acquiring Arm. This acquisition has the potential to be one of the focal points that I think will lead to a technological revolution in computing technologies, particularly around artificial intelligence but that will also impact nearly every embedded system at the edge and beyond. While many of us have probably wondered what plans NVIDIA CEO Jensen Huang may have for Arm, the Keynotes for October 6th include a fireside chat between Jensen Huang and Arm CEO Simon Segars. Listening to this conversation is well worth the time and will help give developers some insights into the future but also assurances that the Arm business model will not be dramatically upended.

Take-A-Way #2 – Machine Learning for MCUs is Accelerating

It is sometimes difficult at a conference to get a feel for what is real and what is a little more smoke and mirrors. Sometimes, announcements are real, but they just take several years to filter their way into the market and affect how developers build systems. Machine learning is one of those technologies that I find there is a lot of interest around but that developers also aren’t quite sure what to do with yet, at least in the microcontroller space. When we hear machine learning, we think artificial intelligence, big datasets and more processing power than will fit on an MCU.

There were several interesting talks at DevSummit around machine learning such as:

- Beyond ML – A Neuromorphic Approach to AI by Paul Isaacs

- tinyML Development with TensorFlow Lite for Microcontrollers and CMSIS-NN by Peter Warden and Fredrik Knutsson

- uTVM, an AI Compiler for Arm Microcontrollers by Thomas Gall

- Machine Learning Made Possible for Embedded Developers with Zero AI Skills by Francois de Rochebouet

Some of these were foundational, providing embedded developers with the fundamentals to get started while others provided hands-on explorations of machine learning with development boards. The take-a-way that I gather here is that the effort to bring machine learning capabilities to microcontrollers so that they can be leveraged in industry use cases is accelerating. Lots of effort is being placed in ML algorithms, tools, frameworks and even the hardware. There were several talks that mentioned Arm’s Cortex-M55 architecture that will include Helium technology to help accelerate machine learning and DSP processing capabilities.

Take-A-Way #3 – The Constant Need for Reinvention

In my last take-a-way, I alluded to the fact that things are accelerating. Acceleration is not just happening, though, in the technologies that we use to build systems. The very application domains that we can apply these technologies to are dramatically expanding. Not only can we start to deploy security and ML technologies at the edge but also in domains such as space and medical systems. There were several interesting talks about how technologies are being used around the world to solve interesting and unique problems such as protecting vulnerable ecosystems, mapping the sea floor, fighting against diseases and so much more.

By carefully watching and listening, you’ll notice that many speakers have been involved in many different types of products over their careers and that they are constantly having to reinvent their skill sets, capabilities and even their interests! This is what makes working in embedded systems so interesting! It is constantly changing and evolving and as engineers we don’t get to sit idly behind a desk. Just as Arm, NVIDIA and many of the other ecosystem partners and speakers show us, technology is rapidly changing but so are the problem domains that we can apply these technologies to.

Take-A-Way #4 – Mbed and Keil are Evolving

There are also interesting changes coming to the Arm toolchains and tools like Mbed and Keil MDK. In Reinhard Keil’s talk, “Introduction to an Open Approach for Low-Power IoT Development,” developers got an insight into the changes that are coming to Mbed and Keil with the core focus being on IoT development. The talk focused on the endpoint and discussed how Mbed and Keil MDK are being moved to an online platform designed to help developers move through product development faster, from prototyping to production. Keil Studio Online is currently in early access and will be released early next year.

(If you are interested in endpoints and AI, you might also want to check out this article on “How Do We Accelerate Endpoint AI Innovation? Put Developers First.”)

Conclusions

Arm DevSummit had a lot to offer developers this year and without the need to travel to California to participate. (Although I greatly missed catching up with friends and colleagues in person). If you haven’t already, I would recommend checking out the DevSummit and watching a few of the talks I mentioned. There certainly were a lot more talks and I’m still in the process of sifting through everything. Hopefully there will be a few sessions that will inspire you and give you a feel for where the industry is headed and how you will need to pivot your own skills in the coming years.

Originally posted here

This blog is the second part of a series covering the insights I uncovered at the 2020 Embedded Online Conference.

Last week, I wrote about the fascinating intersection of the embedded and IoT world with data science and machine learning, and the deeper co-operation I am experiencing between software and hardware developers. This intersection is driving a new wave of intelligence on small and cost-sensitive devices.

Today, I’d like to share with you my excitement around how far we have come in the FPGA world. What used to be something only a few individuals in the world were able to do is on the verge of becoming more accessible.

I’m a hardware guy and I started my career writing in VHDL at university. I then started working on designing digital circuits with Verilog and C and used Python only as a way of automating some of the most tedious daily tasks. More recently, I have started to appreciate the power of abstraction and simplicity that is achievable through the use of higher-level languages, such as Python, Go, and Java. And I dream of a reality in which I’m able to use these languages to program even the most constrained embedded platforms.

At the Embedded Online Conference, Clive Maxfield talked about FPGAs and mentioned that “in a world of 22 million software developers, there are only around a million core embedded programmers and even fewer FPGA engineers.” But things are changing. As an industry, we are moving towards a world in which taking advantage of the capabilities of a reconfigurable hardware device, such as an FPGA, is becoming easier.

- What the FAQ is an FPGA, by Max the Magnificent, starts with what an FPGA is and the beauties of parallelism in hardware – something that took me quite some time to grasp when I first started writing in HDL (hardware description languages). This is not only the case for an FPGA, but it also holds true in any digital circuit. The cool thing about an FPGA is the fact that at any point you can just reprogram the whole board to operate in a different hardware configuration, allowing you to accelerate a completely new set of software functions. What I find extremely interesting is the new tendency to abstract away even further, by creating HLS (high-level synthesis) representations that allow a wider set of software developers to start experimenting with programmable logic.

- The concept of extending the way FPGAs can be programmed to an even wider audience is taken to the next level by Adam Taylor. He talks about PYNQ, an open-source project that allows you to program Xilinx boards in Python. This is extremely interesting as it opens up the world of FPGAs to even more software engineers. Adam demonstrates how you can program an FPGA to accelerate machine learning operations using the PYNQ framework, from creating and training a neural network model to running it on an Arm-based Xilinx FPGA with custom hardware accelerator blocks in the FPGA fabric.

FPGAs have always had the stigma of being difficult to work with. The idea of programming an FPGA in Python was something that no one had even imagined a few years ago. But today, thanks to the many efforts all around our industry, embedded technologies, including FPGAs, are being made more accessible, allowing more developers to participate, experiment, and drive innovation.

I’m excited that more computing technologies are being put in the hands of more developers, improving development standards, driving innovation, and transforming our industry for the better.

If you missed the conference and would like to catch the talks mentioned above*, visit www.embeddedonlineconference.com

Part 3 of my review can be viewed by clicking here

In case you missed the previous post in this blog series, here it is:

*This blog only features a small collection of all the amazing speakers and talks delivered at the Conference!

I recently joined the Embedded Online Conference thinking I was going to gain new insights on embedded and IoT techniques. But I was pleasantly surprised to see a huge variety of sessions with a focus on modern software development practices. It is becoming more and more important to gain familiarity with a more modern software approach, even when you’re programming a constrained microcontroller or an FPGA.

Historically, there has been a large separation between application developers and those writing code for constrained embedded devices. But things are now changing. The intersection of the embedded world with the world of IoT, data science, and ML, together with the deeper co-operation between software and hardware communities, is driving innovation. The Embedded Online Conference, artfully organised by Jacob Beningo, represented exactly this cross-section, shedding light on some of the most interesting areas in the embedded world: machine learning on microcontrollers, using test-driven development to reduce bugs, and programming an FPGA in Python are all things that, a few years ago, had little to do with the IoT and embedded industry.

This blog is the first part of a series discussing these new and exciting changes in the embedded industry. In this article, we will focus on machine learning techniques for low-power and cost-sensitive IoT and embedded Arm-based devices.

Think like a machine learning developer

Considered for many years an academic dead end of limited practical use, machine learning has gained a lot of renewed traction in recent years and has now become one of the most interesting trends in the IoT space. TinyML is the buzzword of the moment, and it was a hot topic at the Embedded Online Conference. However, for embedded developers, this buzzword can sometimes add an element of uncertainty.

The thought of developing IoT applications with the addition of machine learning can seem quite daunting. During Pete Warden’s session about the past, present and future of embedded ML, he described the embedded and machine learning worlds as very fragmented: there are so many hardware variants, RTOSes, toolchains and sensors that simply compiling and running a ‘hello world’ program can take developers a long time. In the new world of machine learning, there’s a constant churn of new models, which often use different types of mathematical operations. Plus, exporting ML models to a development board or other targets is often more difficult than it should be.

Despite some of these challenges, change is coming. Machine learning on constrained IoT and embedded devices is being made easier by new development platforms, models that work out-of-the-box with these platforms, plus the expertise and increased resources from organisations like Arm and communities like tinyML. Here are a few must-watch talks to help in your embedded ML development:

- New to the tinyML space is Edge Impulse, a start-up that provides a solution for collecting device data, building a model based around it and deploying it to make sense of the data directly on the device. Jan Jongboom, CTO at Edge Impulse, talks about how to combine a traditional signal processing pipeline with a machine learning model to detect anomalies and recognize different gestures. All of this has now been made even easier by the announced collaboration with Arduino, which further simplifies the journey to train a neural network and deploy it on your device.

- Arm recently announced new machine learning IP that not only has the capabilities to deliver a huge uplift in performance for low-power ML applications, but will also help solve many issues developers are facing today in terms of fragmented toolchains. The new Cortex-M55 processor and Ethos-U55 microNPU will be supported by a unified development flow for DSP and ML workloads, integrating optimizations for machine learning frameworks. Watch this talk to learn how to get started writing optimized code for these new processors.

- An early adopter implementing object detection with ML on a Cortex-M is the OpenMV camera - a low-cost module for machine vision algorithms. During the conference, embedded software engineer Lorenzo Rizzello walks you through how to get started with ML models and deploy them to the OpenMV camera to detect objects and the environment around the device.
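To make the idea of pairing a signal processing step with anomaly detection a little more concrete, here is a minimal Python sketch. It is not Edge Impulse's actual pipeline: the function names, the choice of RMS as the only feature, and the simple z-score threshold are all assumptions made purely for illustration.

```python
import math
import statistics


def rms_features(samples, window):
    """Split a 1-D signal into non-overlapping windows and return each window's RMS."""
    feats = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        feats.append(math.sqrt(sum(x * x for x in chunk) / window))
    return feats


def fit_baseline(features):
    """Learn the mean and (population) standard deviation from 'normal' data."""
    return statistics.mean(features), statistics.pstdev(features)


def is_anomaly(feature, baseline, threshold=3.0):
    """Flag a feature whose distance from the baseline mean exceeds `threshold` std devs."""
    mean, std = baseline
    if std == 0:
        return feature != mean
    return abs(feature - mean) / std > threshold


# Hypothetical 'normal' sensor data: a sine wave with slightly varying amplitude.
normal = [math.sin(i / 5) * (1 + 0.05 * ((i // 20) % 3)) for i in range(200)]
baseline = fit_baseline(rms_features(normal, 20))

# A much stronger vibration should stand out against the learned baseline.
burst = rms_features([5 * math.sin(i / 5) for i in range(20)], 20)[0]
print(is_anomaly(burst, baseline))
```

On a real device the features would typically be richer (for example spectral features) and the anomaly model more robust, but the overall shape stays the same: extract features, learn what normal looks like, and flag what falls far outside it.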

Putting these machine learning technologies in the hands of embedded developers opens up new opportunities. I’m excited to see and hear what will come of all this amazing work and how it will improve development standards and transform embedded devices of the future.

If you missed the conference and would like to catch the talks mentioned above*, visit www.embeddedonlineconference.com

*This blog only features a small collection of all the amazing speakers and talks delivered at the Conference!

Part 2 of my review can be viewed by clicking here

Artificial intelligence plays a significant role in the growth of the IoT sector. It can now perform tasks that were previously limited to the human workforce, and artificial intelligence development companies have made an impact in almost every sector by changing people's everyday lives.

The Internet of Things was introduced in recent years with the objective of transferring data between devices over the internet. IoT relies heavily on an internet connection that handles large volumes of data. Over the last two years, IoT and artificial intelligence have been going hand in hand, as AI helps turn this data into actionable results.

Artificial intelligence helps IoT handle data through algorithms, converting raw data into insight with the help of machine learning-based analytics. AI developers help companies monitor operations and extract insight from the data with greater accuracy.

Gartner predicts that by 2022, more than 80% of enterprise IoT projects will include an AI component, up from only 10% today.

How AI and IoT are Contributing to Growth

AI at work can access your IoT databases and learn about your choices on the basis of past preferences; these personalized suggestions can make someone's life much easier. Here are some vital areas where artificial intelligence affects the Internet of Things.

- Greater Revenue

IoT and AI are proving to be beneficial for most industries. The benefits show up as greater revenues and returns, along with smart service-based solutions. Together, this combination is transforming the workflows and productivity of businesses.

- Reduced Costs

AI and IoT reduce operational costs by properly monitoring smart sensors, meters, and other fitted appliances. AI solution providers offer features that minimize the operational costs of households and business enterprises.

- Predictive Analysis

AI uses the power of analysis to forecast and minimize unpredictable incidents at the most basic level. It allows businesses to use real-time data to determine the condition of machinery and equipment that are likely to break down soon. Action can then be taken in advance to avoid damage or loss and the associated cost.

- Enhance Risk Management

A number of applications that integrate IoT with AI help companies better understand and foresee a range of risks and respond to them quickly, allowing them to better manage worker safety, losses, and cybersecurity attacks. Such applications identify possible losses and try to eliminate a threat before it gains access.
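The predictive analysis point above (spotting machinery that is likely to break down soon) can be illustrated with a small Python sketch. It is only one possible approach, assuming evenly spaced sensor readings, a simple straight-line trend, and a known failure limit; the function names and the example numbers are hypothetical.

```python
def fit_line(values):
    """Least-squares slope and intercept for values sampled at t = 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two readings to fit a trend")
    t_mean = (n - 1) / 2
    v_mean = sum(values) / n
    cov = sum((t - t_mean) * (v - v_mean) for t, v in enumerate(values))
    var = sum((t - t_mean) ** 2 for t in range(n))
    slope = cov / var
    return slope, v_mean - slope * t_mean


def steps_until_limit(values, limit):
    """Estimate how many more samples remain before the trend crosses `limit`.

    Returns None when the readings are not rising, i.e. no crossing is predicted.
    """
    slope, intercept = fit_line(values)
    if slope <= 0:
        return None
    t_cross = (limit - intercept) / slope
    return max(0.0, t_cross - (len(values) - 1))


# Hypothetical vibration readings that creep upward over time.
readings = [1.0, 1.5, 2.0, 2.5, 3.0]
print(steps_until_limit(readings, limit=5.0))
```

A maintenance system could schedule an inspection once the estimated number of remaining samples drops below some margin, rather than waiting for the limit to be reached.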

How IoT impacts Industrial Sector

#1 Manufacturing

Manufacturing industries are integrating smart sensors with the help of IoT in order to enhance efficiency. These smart sensors help in detecting threats, be it in aircraft, automobiles, or household appliances. Implementing artificial intelligence solutions and smart sensors can help diminish errors and also reduce overall cost with the help of prognostic analysis.

#2 Smart Homes

IoT introduced the concept of a smart home where all devices are connected through a shared network. By integrating this with AI, all of these devices will be able to understand the commands of their users and make smart decisions accordingly.

It can also help to reduce the cost of electricity by switching off lights and other devices when they are not in use.

#3 Airlines

With the evolution of the latest IoT technology, all kinds of machines are run through sensors, and we do not need to physically touch the devices to operate them. In airlines, these sensors are used to identify maintenance issues; if a flight is delayed or cancelled, messages are automatically sent to passengers about the schedule or the delay. Artificial intelligence solutions automatically transmit the issue, be it a flight delay, a cancellation, or any other essential information.

Conclusion

The cumulative impact of AI and IoT will fundamentally restructure our personal and professional lives. Therefore, wise companies opt for a strategic and innovative approach to control this incoming phenomenon and transform it into a huge opportunity to grow. Together, AI and IoT aim to gather, process, and store important data with a visionary approach that can help businesses scale. IoT development companies are reshaping the whole scenario by combining AI and IoT functionalities.

- Image and Face Recognition: These APIs understand the content of an image, classify it into various categories, detect individual objects and faces, and detect labels and logos.

- Language Translation: These APIs translate text between thousands of languages and can identify the language in which any text you need to analyze was written. Some APIs allow organizations to communicate with customers in their own language.

- Speech Recognition and Conversion: Today much of customer service is handled by chatbots, with underlying APIs handling simple questions and answers. Speech-to-text APIs are used to convert call center voice calls into text for further analysis.

- Text/Sentiment Analytics using NLP: With the rise of social media, consumers easily express and share their opinions about companies, products, services, events, etc. Companies are interested in monitoring what people say about their brands in order to get feedback or enhance their marketing efforts. These APIs can identify, analyze, and extract the main content and sections from any web page. They also help analyze unstructured text for sentiment analysis, key phrase extraction, language detection and topic detection. Some tools also help with spam detection.

- Prediction: These APIs, as the name suggests, help to predict and find patterns in the data. Typical examples are fraud detection, customer churn, predictive maintenance, recommender systems and forecasting.