
In this IoT Central Video Feature, we present Jacob Sorber's video, "How to Get Started Learning Embedded Systems." Jacob is a computer scientist, researcher, teacher, and Internet of Things enthusiast. He teaches systems and networking courses at Clemson University and leads the PERSIST research lab. His “get started” videos are valuable for those early in their practice.

From Jacob: I've been meaning to start making more embedded systems videos — that is, computer science videos oriented to things you don't normally think of as computers (toys, robots, machines, cars, appliances). I hope this video helps you take the first step.

Helium, the company behind one of the world’s first peer-to-peer wireless networks, is announcing Helium Tabs, its first branded IoT tracking device that runs on The People’s Network. In addition, after launching its network in 1,000 cities in North America within one year, the company is expanding to Europe to meet growing market demand, with Helium Hotspots shipping to the region starting in July 2020.

Since its launch in June 2019, Helium has quickly grown its footprint, with Hotspots covering more than 700,000 square miles across North America. Helium is now expanding to Europe to allow seamless use of connected devices across borders. Powered by entrepreneurs looking to own a piece of the people-powered network, Helium’s open-source blockchain technology incentivizes individuals to deploy Hotspots and earn Helium (HNT), a new cryptocurrency, for building the network and enabling IoT devices to send data to the Internet. Connected with other nearby Hotspots, each Hotspot forms part of the backbone of the network.

“We’re excited to launch Helium Tabs at a time where we’ve seen incredible growth of The People’s Network across North America,” said Amir Haleem, Helium’s CEO and co-founder. “We could not have accomplished what we have done, in such a short amount of time, without the support of our partners and our incredible community. We look forward to launching The People’s Network in Europe and eventually bringing Helium Tabs and other third-party IoT devices to consumers there.”

Introducing Helium Tabs that Run on The People’s Network

Unlike other tracking devices, Tabs use LongFi technology, which combines the LoRaWAN wireless protocol with the Helium blockchain and provides network coverage up to 10 miles away from a single Hotspot. This is a game-changer compared to Wi-Fi and Bluetooth-enabled tracking devices, which only work up to 100 feet from a network source. What’s more, thanks to Helium’s blockchain-based rewards system, Hotspot owners are rewarded with Helium (HNT) each time a Tab connects to their Hotspot.

In addition to its growth with partners and customers, Helium has also seen accelerated expansion of its Helium Patrons program, introduced in late 2019. Together, partners, customers, and Patrons have helped strengthen its network.

Patrons are entrepreneurial customers who purchase 15 or more Hotspots to blanket their cities with coverage and serve the customers who use the network. In return, they receive discounts, priority shipping, network tools, and Helium support. Currently, the program has more than 70 Patrons throughout North America and is expanding to Europe.

Key brands that use the Helium Network include:

- Nestle ReadyRefresh, a beverage delivery service company

- Agulus, an agricultural tech company

- Conserv, a collections-focused environmental monitoring platform

Helium Tabs will initially be available to existing Hotspot owners for $49. The Helium Hotspot is now available for purchase online in Europe for €450.

To participate in the 2020 IoT Developer Survey, organized by the Eclipse IoT Working Group, simply click here. This survey should take less than 10 minutes to complete.

This blog is the second part of a series covering the insights I uncovered at the 2020 Embedded Online Conference.

Last week, I wrote about the fascinating intersection of the embedded and IoT world with data science and machine learning, and the deeper co-operation I am experiencing between software and hardware developers. This intersection is driving a new wave of intelligence on small and cost-sensitive devices.

Today, I’d like to share my excitement about how far we have come in the FPGA world: what used to be something only a few individuals could do is on the verge of becoming far more accessible.

I’m a hardware guy and I started my career writing in VHDL at university. I then started working on designing digital circuits with Verilog and C and used Python only as a way of automating some of the most tedious daily tasks. More recently, I have started to appreciate the power of abstraction and simplicity that is achievable through the use of higher-level languages, such as Python, Go, and Java. And I dream of a reality in which I’m able to use these languages to program even the most constrained embedded platforms.

In his talk on FPGAs at the Embedded Online Conference, Clive Maxfield noted that “in a world of 22 million software developers, there are only around a million core embedded programmers and even fewer FPGA engineers.” But things are changing. As an industry, we are moving toward a world in which it is becoming easier to take advantage of the capabilities of a reconfigurable hardware device such as an FPGA.

- What the FAQ is an FPGA, by Max the Magnificent, starts with what an FPGA is and the beauty of parallelism in hardware, something that took me quite some time to grasp when I first started writing in HDLs (hardware description languages). This is true not only of FPGAs but of any digital circuit. The cool thing about an FPGA is that at any point you can reprogram the device to implement a completely different hardware configuration, allowing you to accelerate a whole new set of software functions. What I find extremely interesting is the new trend toward abstracting even further, with HLS (high-level synthesis) flows that let a wider set of software developers start experimenting with programmable logic.

- Adam Taylor takes the idea of opening up FPGA programming to an even wider audience to the next level. He talks about PYNQ, an open-source project that lets you program Xilinx boards in Python, which opens up the world of FPGAs to even more software engineers. Adam demonstrates how to use the PYNQ framework to accelerate machine learning operations on an FPGA, from creating and training a neural network model to running it on an Arm-based Xilinx FPGA with custom hardware accelerator blocks in the FPGA fabric.

FPGAs have long had a reputation for being difficult to work with. A few years ago, hardly anyone imagined programming an FPGA in Python. But today, thanks to efforts all across our industry, embedded technologies, including FPGAs, are becoming more accessible, allowing more developers to participate, experiment, and drive innovation.

I’m excited that more computing technologies are being put in the hands of more developers, improving development standards, driving innovation, and transforming our industry for the better.

If you missed the conference and would like to catch the talks mentioned above*, visit www.embeddedonlineconference.com

Part 3 of my review can be viewed by clicking here


*This blog only features a small collection of all the amazing speakers and talks delivered at the Conference!

Recovering from a system failure or a software glitch can be no easy task. The longer a fault persists, the harder it can be to identify and recover from. An external watchdog is an important tool in the embedded systems engineer’s toolbox. Here are five tips to keep in mind when designing a watchdog system.

Tip #1 – Monitor a heartbeat

The simplest function that an external watchdog can have is to monitor a heartbeat that is produced by the primary application processor. Monitoring of the heartbeat should serve two distinct purposes. First, the microcontroller should only generate the heartbeat after functional checks have been performed on the software to ensure that it is functioning. Second, the heartbeat should be able to reveal if the real-time response of the system has been jeopardized.

Monitoring the heartbeat for software functionality and real-time response can be done using a simple, “dumb” external watchdog. The external watchdog should allow you to set a heartbeat period along with a window within which the heartbeat must appear. The window lets the watchdog detect that the real-time response of the system is compromised. If either the functional or the real-time checks fail, the watchdog attempts to recover the system by resetting the application processor.
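A minimal sketch of both halves of this scheme in C, assuming a free-running millisecond tick and hypothetical window limits `HEARTBEAT_WINDOW_OPEN_MS` and `HEARTBEAT_WINDOW_CLOSE_MS` (real values depend on the application's timing requirements). The application only produces a heartbeat when its self-checks pass; the watchdog treats a heartbeat that arrives too early as suspect (the software may be skipping work) and one that arrives too late, or not at all, as a sign that real-time response is compromised. All names are illustrative and do not come from any particular watchdog part.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical window limits, in milliseconds since the previous heartbeat. */
#define HEARTBEAT_WINDOW_OPEN_MS  80u   /* earlier than this: too early */
#define HEARTBEAT_WINDOW_CLOSE_MS 120u  /* later than this: too late    */

typedef enum { WDG_OK, WDG_TOO_EARLY, WDG_TOO_LATE } wdg_status_t;

/* Application side: emit a heartbeat only if every functional self-check passed. */
bool app_heartbeat_allowed(const bool *checks_passed, size_t n_checks)
{
    for (size_t i = 0; i < n_checks; i++) {
        if (!checks_passed[i]) {
            return false;
        }
    }
    return true;
}

/* Watchdog side: classify a heartbeat by the time elapsed since the previous one. */
wdg_status_t wdg_classify_heartbeat(uint32_t elapsed_ms)
{
    if (elapsed_ms < HEARTBEAT_WINDOW_OPEN_MS) {
        return WDG_TOO_EARLY;
    }
    if (elapsed_ms > HEARTBEAT_WINDOW_CLOSE_MS) {
        return WDG_TOO_LATE;
    }
    return WDG_OK;
}

/* Watchdog side, polled periodically: true means no heartbeat arrived before
   the window closed, so the application processor should be reset.
   Unsigned subtraction stays correct when the tick counter wraps around. */
bool wdg_heartbeat_missed(uint32_t now_ms, uint32_t last_beat_ms)
{
    return (uint32_t)(now_ms - last_beat_ms) > HEARTBEAT_WINDOW_CLOSE_MS;
}
```

On real hardware, `wdg_heartbeat_missed` would typically run from a timer interrupt and drive the application processor's reset line, while `wdg_classify_heartbeat` would run on each heartbeat edge.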

Tip #2 – Use a low capability MCU

External watchdog timers that can monitor a heartbeat are relatively low cost, but they can severely limit the capabilities and recovery options of the watchdog system. A low-capability microcontroller can cost nearly the same as an external watchdog timer, so why not add some intelligence to the watchdog and use a microcontroller? The microcontroller firmware can implement the windowed heartbeat monitoring and much more besides. A “smart” watchdog like this is sometimes called a supervisor or safety watchdog, and it has been used for many years in industries such as automotive. Microcontroller-based watchdogs have generally been reserved for safety-critical applications, but given today’s development tools and hardware costs, they can be cost-effective in other applications as well.

Tip #3 – Supervise critical system functions

The decision to use a small microcontroller as a watchdog opens nearly endless possibilities for how the watchdog can be used. One of the first roles of a smart watchdog is usually to supervise critical system functions, such as a system current or a sensor state. For example, to supervise a current, the watchdog could take an independent measurement and provide that value to the application processor, which then compares its own reading to the watchdog’s. If the two disagree, the system executes the fault tree deemed appropriate for the application.
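The comparison step could look like the following C sketch, assuming both processors report current in milliamps and using a hypothetical agreement tolerance of 50 mA. Requiring several consecutive disagreements before the fault tree runs (the hypothetical `MISMATCH_LIMIT`) is one common way to avoid reacting to a single noisy sample; whether that added delay is acceptable depends on the application.

```c
#include <stdbool.h>
#include <stdint.h>

#define CURRENT_TOLERANCE_MA 50   /* hypothetical agreement threshold, mA */
#define MISMATCH_LIMIT       3u   /* hypothetical consecutive-mismatch limit */

typedef struct {
    uint8_t mismatches;  /* consecutive reading pairs that disagreed */
} xcheck_t;

/* True if the application's and the watchdog's readings agree within tolerance.
   Readings are assumed to be well inside the int32_t range. */
bool currents_agree(int32_t app_ma, int32_t wdg_ma, int32_t tolerance_ma)
{
    int32_t diff = app_ma - wdg_ma;
    if (diff < 0) {
        diff = -diff;
    }
    return diff <= tolerance_ma;
}

/* Feed one pair of readings; returns true when the fault tree should run. */
bool xcheck_update(xcheck_t *state, int32_t app_ma, int32_t wdg_ma)
{
    if (currents_agree(app_ma, wdg_ma, CURRENT_TOLERANCE_MA)) {
        state->mismatches = 0;  /* agreement clears the history */
        return false;
    }
    if (state->mismatches < MISMATCH_LIMIT) {
        state->mismatches++;
    }
    return state->mismatches >= MISMATCH_LIMIT;
}
```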

Tip #4 – Observe a communication channel

Sometimes an embedded system can appear to be operating as expected to both the watchdog and the application processor, yet be non-responsive from the point of view of an external observer. In such cases it can be useful to tie the smart watchdog to a communication channel such as a UART. Connected to a communication channel, the watchdog can not only monitor channel traffic but also receive commands addressed specifically to it. A great example is a watchdog designed for a small satellite that monitors radio communications between the flight computer and the ground station. If the flight computer stops responding to the radio, a command can be sent to the watchdog, which executes it and resets the flight computer.
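A minimal sketch of a watchdog listening on a UART, assuming a hypothetical two-byte framing in which the byte `0xA5` addresses the watchdog and `0x01` asks it to reset the target processor. The receive function is meant to be called once per received byte (for example, from the UART receive interrupt) and also counts traffic, which the watchdog could use to notice a channel that has gone silent. A real design would add a checksum or authentication so that ordinary traffic, or a noisy radio link, cannot trigger a reset by accident.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical framing: [WDG_ADDR][CMD]. All other bytes are ordinary traffic. */
#define WDG_ADDR         0xA5u
#define CMD_RESET_TARGET 0x01u

typedef enum { RX_IDLE, RX_GOT_ADDR } rx_state_t;

typedef struct {
    rx_state_t state;
    uint32_t   bytes_seen;  /* total traffic observed on the channel */
} wdg_uart_t;

/* Feed one received byte; returns true when a reset command was decoded. */
bool wdg_uart_rx(wdg_uart_t *u, uint8_t byte)
{
    u->bytes_seen++;
    switch (u->state) {
    case RX_IDLE:
        if (byte == WDG_ADDR) {
            u->state = RX_GOT_ADDR;  /* next byte is a watchdog command */
        }
        return false;
    case RX_GOT_ADDR:
        u->state = RX_IDLE;
        return byte == CMD_RESET_TARGET;
    }
    return false;
}
```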

Tip #5 – Consider an externally timed reset function

The question of who is watching the watchdog is undoubtedly on the minds of many engineers when using a microcontroller as a watchdog. Using a microcontroller to implement extra features adds complexity and a new software element to the system. If the watchdog itself goes off into the weeds, how will it recover? One option is to use the external watchdog timer discussed earlier: the smart watchdog generates a heartbeat to keep itself from being reset by the watchdog timer. Another option is to have the application processor act as the watchdog for the watchdog. Careful thought needs to be given to the best way to ensure that both processors keep functioning as intended.

Conclusion

The purpose of the smart watchdog is to monitor the system and the primary microcontroller to ensure that they operate as expected. During the design of a system watchdog it can be very tempting to let the feature set creep. Developers need to keep in mind that as the complexity of the smart watchdog increases, so does the probability that the watchdog itself contains failure modes and bugs. Keeping the watchdog simple, with the minimum necessary feature set, makes it possible to test it exhaustively and prove that it works.

Originally Posted here

I recently joined the Embedded Online Conference thinking I was going to gain new insights on embedded and IoT techniques. But I was pleasantly surprised to see a huge variety of sessions with a focus on modern software development practices. It is becoming more and more important to gain familiarity with a more modern software approach, even when you’re programming a constrained microcontroller or an FPGA.

Historically, there has been a large separation between application developers and those writing code for constrained embedded devices. But things are now changing. The intersection of the embedded world with IoT, data science, and ML, together with deeper co-operation between the software and hardware communities, is driving innovation. The Embedded Online Conference, artfully organised by Jacob Beningo, represented exactly this cross-section, shedding light on some of the most interesting areas in the embedded world: machine learning on microcontrollers, test-driven development to reduce bugs, and programming an FPGA in Python are all things that, a few years ago, had little to do with the IoT and embedded industry.

This blog is the first part of a series discussing these new and exciting changes in the embedded industry. In this article, we will focus on machine learning techniques for low-power and cost-sensitive IoT and embedded Arm-based devices.

Think like a machine learning developer

Considered for many years an academic dead end of limited practical use, machine learning has gained a lot of renewed traction in recent years and has become one of the most interesting trends in the IoT space. TinyML is the buzzword of the moment, and it was a hot topic at the Embedded Online Conference. However, for embedded developers, this buzzword can sometimes add an element of uncertainty.

The thought of developing IoT applications with machine learning can seem quite daunting. During Pete Warden’s session about the past, present and future of embedded ML, he described the embedded and machine learning worlds as very fragmented: there are so many hardware variants, RTOSes, toolchains and sensors that just compiling and running a simple ‘hello world’ program can take developers a long time. In the new world of machine learning, there’s a constant churn of new models, which often use different types of mathematical operations. Plus, exporting ML models to a development board or other target is often more difficult than it should be.

Despite some of these challenges, change is coming. Machine learning on constrained IoT and embedded devices is being made easier by new development platforms, models that work out-of-the-box with these platforms, plus the expertise and increased resources from organisations like Arm and communities like tinyML. Here are a few must-watch talks to help in your embedded ML development:

- New to the tinyML space is Edge Impulse, a start-up that provides a solution for collecting device data, building a model from it, and deploying that model to make sense of the data directly on the device. Edge Impulse CTO Jan Jongboom talks about how to combine a traditional signal processing pipeline with a machine learning model to detect different gestures and to detect anomalies. All of this has been made even easier by the announced collaboration with Arduino, which further simplifies the journey of training a neural network and deploying it on your device.

- Arm recently announced new machine learning IP that not only has the capabilities to deliver a huge uplift in performance for low-power ML applications, but will also help solve many issues developers are facing today in terms of fragmented toolchains. The new Cortex-M55 processor and Ethos-U55 microNPU will be supported by a unified development flow for DSP and ML workloads, integrating optimizations for machine learning frameworks. Watch this talk to learn how to get started writing optimized code for these new processors.

- An early adopter of ML-based object detection on a Cortex-M is the OpenMV camera, a low-cost module for machine vision algorithms. During the conference, embedded software engineer Lorenzo Rizzello walked through how to get started with ML models and deploy them to the OpenMV camera to detect objects in the environment around the device.

Putting these machine learning technologies in the hands of embedded developers opens up new opportunities. I’m excited to see and hear what will come of all this amazing work and how it will improve development standards and transform embedded devices of the future.

If you missed the conference and would like to catch the talks mentioned above*, visit www.embeddedonlineconference.com

*This blog only features a small collection of all the amazing speakers and talks delivered at the Conference!

Part 2 of my review can be viewed by clicking here

All the representations I’ve seen regarding social distancing guidelines for engineers have depicted what appear to be two male members of the species, which is “So mid-20th century, my dear!”

by Jack Ganssle

jack@ganssle.com

Recently our electric toothbrush started acting oddly – differently from before. I complained to Marybeth who said, “I think it’s in the wrong mode.”

Really? A toothbrush has modes?

We in the embedded industry have created a world that was unimaginable prior to the invention of the microprocessor. Firmware today controls practically everything, from avionics to medical equipment to cars to, well, everything.

And toothbrushes.

But we’re working too hard at it. Too many of us use archaic development strategies that aren’t efficient. Too many of us ship code with too many errors. That's something that can, and must, change.

Long ago the teachings of Deming and Juran revolutionized manufacturing. One of Deming's essential insights was that fixing defects will never lead to quality. Quality comes from correct design rather than patches applied on the production line. And focusing on quality lowers costs.

The software industry never got that memo.

The average embedded software project devotes 50% of the schedule to debugging and testing the code. It's stunning that half of the team’s time is spent finding and fixing mistakes.

Test is hugely important. But as Dijkstra observed, testing can only show the presence of bugs, never their absence.

Unsurprisingly, and mirroring Deming's tenets, it has repeatedly been shown that a focus on fixing bugs will never lead to a quality product; all it does is extend the schedule and ensure defective code goes out the door.

Focusing on quality has another benefit: the project gets done faster. Why? That 50% of the schedule used to deal with bugs gets dramatically shortened. We shorten the schedule by not putting the bugs in in the first place.

High quality code requires a disciplined approach to software engineering - the methodical use of techniques and approaches long known to work. These include inspection of work products, using standardized ways to create the software, seeding code with constructs that automatically catch errors, and using various tools that scan the code for defects. Nothing that is novel or unexpected, nothing that a little Googling won't reveal. All have a long pedigree of studies proving their efficacy.

Yet only one team out of 50 makes disciplined use of these techniques.

What about metrics? Walk a production line and you'll see the walls covered with charts showing efficiency, defect rates, inventory levels and more. Though a creative discipline like engineering can't be made as routine as manufacturing, there are a lot of measurements that can and must be used to understand the team's progress and the product's quality, and to drive the continuous improvement we need.

Errors are inevitable. We will ship bugs. But we need a laser-like focus on getting the code right. How right? We have metrics; we know how many bugs the best and the mediocre teams ship. Defect Removal Efficiency is a well-known metric for evaluating the quality of shipped code: it's the percentage of all the bugs found in a product that were removed prior to shipping (bugs reported up to 90 days after release are counted). The very best teams, representing just 0.4% of the industry, eliminate over 99% of bugs before shipment. Most embedded groups remove only 95%.
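As a worked illustration of the metric as defined above: a team that removes 950 bugs before release and then sees 50 more reported in the first 90 days has a DRE of 950 / (950 + 50) = 95%, the typical embedded figure; reaching 99% with the same 950 pre-release fixes would mean fewer than 10 bugs escaping. The small C helper below simply encodes that ratio; the function name is illustrative, and returning -1.0 when no defects were recorded at all is an assumption of this sketch, since the ratio is undefined in that case.

```c
#include <stdint.h>

/* Defect Removal Efficiency, in percent:
   DRE = 100 * removed_before_release
             / (removed_before_release + found_within_90_days)
   Returns -1.0 when no defects were recorded at all (DRE is undefined). */
double defect_removal_efficiency(uint32_t removed_before_release,
                                 uint32_t found_within_90_days)
{
    /* Sum in double so large counts cannot overflow a 32-bit integer. */
    double total = (double)removed_before_release + (double)found_within_90_days;
    if (total == 0.0) {
        return -1.0;
    }
    return 100.0 * (double)removed_before_release / total;
}
```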

Where does your team stand on this scale? Can one control quality if it isn’t measured?

We have metrics about defect injection rates, about where in the lifecycle they are removed, about productivity vs. any number of parameters and much more. Yet few teams collect any numbers.

Engineering without numbers isn’t engineering. It’s art.

Want to know more about metrics and quality in software engineering? Read any of Capers Jones’ books. They are dense, packed with tables of numbers, and sometimes difficult because the narrative is not engaging, but they paint a picture of what we can measure and how different development activities affect errors and productivity.

Want to understand where the sometimes-overhyped agile methods make sense? Read Agile! by Bertrand Meyer and Balancing Agility and Discipline by Barry Boehm and Richard Turner.

Want to learn better ways to schedule a project and manage requirements? Read any of Karl Wiegers’ books and articles.

The truth is that we know of better ways to get great software done more efficiently and with drastically reduced bug rates.

When will we start?

Jack Ganssle has written over 1000 articles and six books about embedded systems, as well as one about his sailing fiascos. He has started and sold three electronics companies. He welcomes dialog at jack@ganssle.com or at www.ganssle.com.

In today’s economy, most software businesses are looking to provide new, high-performing applications along with a seamless customer experience. Kubernetes is one of the emerging platforms that enable companies to run and manage containerized applications globally. Before we dive into the details, let’s first understand what Kubernetes is.

What is Kubernetes?

Kubernetes (also known as K8s) is an open-source platform, originally developed by Google, for managing containerized applications across multiple servers. It provides basic frameworks for deploying, maintaining, and scaling applications.

According to a Gartner report, by 2022 more than 75% of organizations globally will be running containerized applications.

Kubernetes can run in the public cloud or in an on-premises environment. Cloud computing service providers such as AWS, Microsoft Azure, and GCP provide managed Kubernetes services that let customers start up K8s apps quickly and operate them efficiently.


So how does Kubernetes benefit large enterprises?

Here are five primary business capabilities that Kubernetes drives for large enterprises:

- Faster app development/deployment

- Reducing resource costs

- Workload scalability

- Effective cloud migration

- Multi-cloud flexibility

1. Faster app development/deployment

Kubernetes is well suited to deploying applications built as microservices. With it, you can split the IT team into smaller teams, each focused on a smaller service or a particular functional area, which lets them work effectively and saves a lot of time.

Well-defined APIs between these microservices reduce the amount of cross-team communication needed to build and deploy them. You can easily scale the application up or down as required. Kubernetes can also provide access to storage from different providers, such as AWS and Microsoft Azure, and it handles networking between containers.

2. Reducing IT and resource expenditures

If you are running a business at a large scale, Kubernetes can help you reduce your infrastructure costs significantly. By integrating apps with your cloud and hardware resources, Kubernetes makes a container-based architecture practical. Before Kubernetes, application administrators often over-provisioned infrastructure to handle unexpected spikes, simply because it was too difficult to scale up containerized applications manually.

Kubernetes considers the available resources and smartly schedules and tightly packs the containers onto them. If the number of users of an app grows, it can automatically add more processing capacity (when autoscaling is configured) so that more users can be served, which ultimately frees your developers to focus on other productive activities.

3. Workload Scalability

Because containers are lightweight by design, they can be created in seconds, and it is easy to break an application down into individual components, each with its own function. You can therefore scale up quickly to respond to demand. For example, an e-commerce app experiences massive traffic during festivals or sales and much less on regular days. In such situations, what we need is a solution that scales the application up when users are buying more goods and scales it down when the load decreases.

Kubernetes can scale workloads based not only on infrastructure metrics such as resource utilization, but also on custom metrics.
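To make metric-driven scaling concrete: the Kubernetes Horizontal Pod Autoscaler documents its core rule as desiredReplicas = ceil[currentReplicas × (currentMetricValue / desiredMetricValue)], skipping any change when the ratio is within a tolerance of 1.0 (0.1 by default), and clamping the result to the configured `minReplicas` and `maxReplicas`. The C function below re-implements just that arithmetic to show how it behaves; it is not Kubernetes code, it uses integer metrics (for example, average CPU millicores per pod) to avoid floating-point rounding, and it ignores details of the real controller such as stabilization windows and missing metrics.

```c
#include <stdint.h>

/* Illustrative re-implementation of the HPA scaling arithmetic:
   desired = ceil(current_replicas * current_metric / target_metric),
   left unchanged when current_metric is within 10% of target_metric,
   then clamped to [min_replicas, max_replicas].
   target_metric must be greater than zero. */
uint32_t hpa_desired_replicas(uint32_t current_replicas,
                              uint64_t current_metric,
                              uint64_t target_metric,
                              uint32_t min_replicas,
                              uint32_t max_replicas)
{
    uint64_t diff = (current_metric > target_metric)
                        ? current_metric - target_metric
                        : target_metric - current_metric;
    uint64_t desired;

    if (10u * diff <= target_metric) {
        /* |ratio - 1| <= 0.1: within tolerance, keep the current count. */
        desired = current_replicas;
    } else {
        /* Integer ceiling division: ceil(a / b) == (a + b - 1) / b. */
        desired = ((uint64_t)current_replicas * current_metric + target_metric - 1u)
                  / target_metric;
    }

    if (desired < min_replicas) {
        desired = min_replicas;
    }
    if (desired > max_replicas) {
        desired = max_replicas;
    }
    return (uint32_t)desired;
}
```

For the e-commerce example: 4 pods averaging 200 millicores against a 100-millicore target scale to 8 replicas during a sale (with a maximum of 10), and when load drops to 50 millicores per pod, the same rule scales 4 pods back down to 2.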

4. Effective cloud migration

Kubernetes is being adopted by many major enterprises for moving apps and web services to container-based cloud environments. The cloud infrastructure is not only stable but also scalable, and it helps reduce operational stress and cost. Kubernetes makes rehosting an app easy and seamless, and if you want to make some changes, they can be done quickly while your app keeps running smoothly. Kubernetes provides flexibility for whatever changes you want to make to the application.

Kubernetes offers a more straightforward and consistent way of moving your application from on-premises infrastructure to the cloud, because it runs reliably both on-premises and on clouds such as AWS, Azure, and GCP.

There are three common paths for migrating your app to Kubernetes:

- Migrating apps to Kubernetes on-premises: In this approach, the focus is on re-platforming your apps into containers and bringing them under Kubernetes orchestration.

- Rehosting the app: Rehosting simply takes your app from one cloud and shifts it to a Kubernetes cluster as-is, with no changes. It is the fastest way to migrate to Kubernetes.

- Refactoring: This involves re-architecting the application to take full advantage of the cloud environment. Once the application is in the cloud, the developer can adapt it to the cloud environment and its services. It is the most advanced way of migrating an app to Kubernetes.


5. Multi-cloud flexibility

With Kubernetes, it is easier for enterprises to run their applications or software on a public cloud or on any combination of private and public clouds. Because the project is 100% open source, it gives you more flexibility. You decide whether you want help from external experts to implement and maintain your Kubernetes services, and you can easily switch service providers if one is not giving good service.

Kubernetes makes it possible to deliver on the promise of multi-cloud and hybrid-cloud deployments. Many companies already use a multi-cloud setup and will continue to do so in the near future.

In short, Kubernetes makes things easier for enterprises running their apps on any public cloud service or on a mix of public and private clouds. It is the best fit if you use its dedicated features and leverage it for easy migration of apps to improve your ROI.

The rise of the IoT, the Internet of Things, marks one of the most significant shifts in the global devices market. The term refers to connecting electronic devices and systems to the internet so that they can perform joint functions autonomously.

We can find an uncanny resemblance to this concept in the movie Home Alone 4 (2002), which portrayed a smart home, pros and cons included, in a way that is now turning into reality. Nearly two decades later, we are on the brink of an IoT revolution that could raise living standards globally. Read on to explore current developments and future horizons.

Current Market Scenario and the Probable Stakeholders

The potential of IoT applications is widely recognized by enterprises, retail consumers, and government agencies alike, as IoT integrates cyber-physical systems with internet connectivity so that they can interact with their surroundings through sensors and actuators. Business Insider estimated the value of this technology at $1.7 trillion in 2019. The use of the Internet of Things will reduce the human effort needed for various tasks and provide controlled environments for achieving the desired results.

Figure: IoT investment by the tech giants (source: Forbes)

Some Famous Use Cases Expected to Take Off Exponentially

Manufacturing Sector

Companies such as ABB, Airbus, Shell, and long-time IoT pioneer Caterpillar have been proactively pushing for innovations that build safer workplaces, requiring fewer human interactions in hazardous work, which also benefits the profitability of the business. IoT is also expected to be widely adopted for predictive maintenance scheduling and inventory management.

Asset Management

Businesses are looking to replace GPS with internet connectivity to track company-owned devices more efficiently. This will also reduce lags in logistics and provide accurate details about consumables, as in the case of printers. Another example would be integrating payroll software with employee vehicles to reimburse travel claims.

Agriculture

Using IoT to develop smart greenhouse farms with autonomously controlled environments will prove to be a boon for the agriculture industry, as it will help boost crop production. Smart irrigation and monitoring systems will track soil quality, moisture content, and atmospheric gas content, and monitor plant health without any human intervention. Farm equipment in greenhouse farms can also be controlled with minimal need for human support.

Smart Homes

Smart homes are one of the largest areas where the IoT revolution is expected to disrupt the electronic devices market, with Google Nest, Ecobee, and Netatmo among the big fish in the business. Everything from regular home appliances such as the fridge and television to ambiance and temperature control systems, music systems, and utilities is connected over the internet and controlled by remote servers. Users can interact with them using their smartphones. Home security is also connected to the central system.

The Effect on Living Standards

Looking at the current scenario, once many of these tasks are automated, people who currently face hazards in their fields, such as heavy machinery or agriculture, will be able to work in a much safer environment. Smart homes will improve quality of life and help households save money, since energy consumption is streamlined, while providing a luxurious experience. Businesses will see higher profitability, and employee morale should rise thanks to the tech-savvy environment.

The Development of Newer Devices through Prototyping

Before an application is rolled out, it is essential to test the reliability of all devices, since they will be used under different conditions and alongside other manufacturers' devices. The addition of newer devices should also be considered. Using prototypes under test conditions will prove to be an economical alternative to full-fledged testing; such prototypes include the sensors and motion actuation systems with minimal cosmetic additions and cladding.

The following considerations will affect the development of future-ready electronic gadgets compatible with IoT applications:

- Protection against web-based third-party cyberattacks.

- Modularized designs with programmable memories.

- Standardization of source code libraries to facilitate interoperability.

- Compliance with regional government regulations.

- Operational conditions.

Modern tech innovations such as AI, Cloud Computing, Blockchain, and the dawn of 5G connectivity will push the IoT movement forward, along with the use of advanced sensors. Increased computing capacity will also strengthen data processing. However, stakeholders in the industry, from vendors to maintenance personnel, will have to keep up with these technological advances. Academic institutions are also expected to support the Internet of Things by including it in their curricula to create a skilled workforce.

The author would also like to discuss the associated threats, which could endanger users. If security is inadequate, the worst-case scenarios include ransomware attacks, theft of user information, and altered device settings that provoke hazardous conditions. Hence, vendors should be conscientious about security as well as the legal repercussions.

Your Piece of the Pie

Many new features and value additions to existing devices and tools will boost the quality of human life and curb the problems caused by dangerous working conditions, along with the health problems that result from them. IoT will find applications across a broad spectrum of business domains, from smart cars to intelligent healthcare, and will radically redefine our homes and workplaces alike.

The real potential is yet to be explored, since the number of gadgets and tools is increasing day by day in every aspect of life. In both economic and social settings, we will see cost optimization going hand in hand with unparalleled convenience, a combination that has been rare so far. We can also expect the Internet of Things to cross paths with other advanced technologies such as Artificial Intelligence, Big Data Analytics, and Edge Computing, multiplying the value each of them adds.

Source: https://factohr.com

How PKI & Embedded Security Can Help Stop Aircraft Cyberattacks

August 27, 2019, by Alan Grau, VP of IoT, Embedded Systems, Sectigo

On July 30th, the U.S. Department of Homeland Security Cybersecurity and Infrastructure Security Agency (CISA) issued a security alert warning small aircraft owners about vulnerabilities that can be exploited to alter airplane telemetry. The component at risk is the aircraft's Controller Area Network (CAN bus), which connects the various avionics systems (control, navigation, sensing, monitoring, communication, and entertainment) that enable modern aircraft to operate safely. The data it carries includes engine telemetry readings, compass and attitude data, airspeeds, and angle of attack, all of which could be hacked to provide false readings to pilots and to the automated computer systems that help fly the plane.

The CISA warning isn’t hypothetical, and the consequences of inaction could prove deadly. Airplane systems have already been compromised. A U.S. government official revealed that in September 2016 he and his team of IT experts had successfully hacked remotely into a Boeing 757 passenger plane as it sat on a New Jersey runway, and were able to take control of its flight functions. The year before, a hacker reportedly used vulnerabilities in the IFE (In-Flight Entertainment) system to take control of flight functions, causing one of the airplane's engines to climb.

The Boeing 757 attack was performed using the In-Flight Entertainment Wi-Fi network.

A researcher with security analytics and automation provider Rapid7 wrote about the security of CAN Bus avionics systems in a recent blog and discussed the challenge at this year’s DEFCON security conference. He explained, "I think part of the reason [the avionics sector is lagging in network security when it comes to CAN bus] is its heavy reliance on the physical security of airplanes . . . Just as football helmets may actually raise the risk of brain injuries, the increased perceived physical security of aircraft may be paradoxically making them more vulnerable to cyberattack, not less."

A False Sense of [Physical Access] Security

The DHS CISA warning stated, "An attacker with physical access to the aircraft could attach a device to an avionics CAN bus that could be used to inject false data, resulting in incorrect readings in avionic equipment.” CISA fears that, if exploited, these vulnerabilities could provide false readings to pilots, and lead to crashes or other air incidents involving small aircraft. Attackers with CAN bus access could alter engine telemetry readings, compass and attitude data, altitude, and airspeeds. Serious stuff.

Not all of these attacks required physical access.

These risks should serve as a wake-up call to everyone in manufacturing. Any device, system, or organization that controls the operation of a system is at risk, and the threats can originate from internal or external sources. It’s critical for OEMs, their supply chains, and enterprises to include security and identity management at the device level and to continually fortify their security capabilities to close vulnerabilities.

Security Solutions for Avionics Devices

Today’s airplanes have dozens of connected subsystems transmitting critical telemetry and control data to each other. Currently, tier-one suppliers and OEMs in aviation have failed to broadly implement security technologies such as secure boot, secure communication and embedded firewalls on their devices, leaving them vulnerable to hacking. While OEMs have begun to address these issues, there is much more to be done.

Sectigo offers solutions so that OEMs, their supply chains, and enterprises can take full advantage of PKI and embedded security technology for connected devices. Our industry-first end-to-end IoT Platform, made possible through the acquisition of Icon Labs, a provider of security solutions for embedded OEMs and IoT device manufacturers, can be used to issue and renew certificates using a single trust model that’s interoperable with any issuance model and across all supported devices, operating systems (OS), protocols, and chipsets.

Much like the automotive industry, the aviation sector has a very complex supply chain, and implementing private PKI and embedded security introduces interoperability challenges. With leading avionics manufacturers introducing hundreds of SKUs per year, maintaining hundreds of different secure boot implementations within a single aircraft is complex, cumbersome, and ultimately untenable. Using a single homogeneous secure boot implementation greatly simplifies the model.

Purpose-built PKI for IoT, such as the Sectigo IoT Manager, enables strong authentication and secure communication between devices within the airframe. Using PKI-based authentication prevents communication from unauthorized components or devices and will eliminate a broad set of attacks.

Embedded firewall technology provides an additional, critical security layer for these systems. This is particularly relevant for attacks such as the Boeing 757 attack via the airline Infotainment Wi-Fi Network. An embedded firewall provides support for filtering rules to prevent access from the Wi-Fi network to the control network.
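The filtering idea can be illustrated with a minimal Python sketch. It rests on simplifying assumptions: traffic is modeled as IPv4 source/destination pairs, and the subnets `WIFI_NET` and `CONTROL_NET`, the `Rule` type, and the `is_allowed` helper are all hypothetical. Real avionics gateways may also filter non-IP traffic (CAN frames, for example) with vendor-specific rule formats, and this is not the Icon Labs firewall.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    src: ipaddress.IPv4Network
    dst: ipaddress.IPv4Network
    allow: bool


# Hypothetical address plan; these subnets are illustrative only.
WIFI_NET = ipaddress.ip_network("10.10.0.0/16")     # passenger / infotainment Wi-Fi
CONTROL_NET = ipaddress.ip_network("10.20.0.0/16")  # avionics control network

RULES = [
    Rule(WIFI_NET, CONTROL_NET, allow=False),    # block Wi-Fi -> control network
    Rule(CONTROL_NET, CONTROL_NET, allow=True),  # allow traffic inside the control network
]


def is_allowed(src_ip: str, dst_ip: str) -> bool:
    """First matching rule wins; anything that matches no rule is denied."""
    src = ipaddress.ip_address(src_ip)
    dst = ipaddress.ip_address(dst_ip)
    for rule in RULES:
        if src in rule.src and dst in rule.dst:
            return rule.allow
    return False  # default deny


print(is_allowed("10.10.3.7", "10.20.0.5"))  # False: Wi-Fi to control network
print(is_allowed("10.20.1.1", "10.20.0.5"))  # True: inside the control network
```

The default-deny fallback is a deliberate choice: traffic the rule set does not anticipate is blocked rather than silently passed through to the control network.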

The Icon Labs embedded firewall has been deployed in airline and automotive systems to address attacks such as these. In both instances, our embedded firewall sits on a gateway device in the vehicle or airplane to prevent unauthorized access from external networks or devices into the control network, or from the Infotainment network to the control network. We continue to see interest in this area, indicating manufacturers are beginning to act.

From Cockpits to Control Towers

Securing connected devices in aviation is not limited to airplanes. The industry requires secure communication between everything on the tarmac, from cockpits and control towers to provisioning vehicles and safety personnel. For that reason, Sectigo provides an award-winning co-root of the AeroMACS consortium, which addresses all broadband communication at airports across the world and calls for security using PKI certificates to be deployed into airplanes, catering trucks, and everything else on the tarmac.

Future Proofing with Crypto Agility

It’s worth noting that aviation is also uniquely challenged by the tenure of its components. Unlike devices that are designed to last for months or years, airplanes are designed to last for decades. Advances in quantum computing, which many experts believe is just around the corner, threaten to make today’s cryptographic standards obsolete. Aeronautical suppliers need to be prepared for this coming “crypto-apocalypse” and to update the security on their devices in the field while the devices are in operation. Sectigo’s over-the-air update abilities provide the cryptographic agility to guard against this upcoming crypto-apocalypse (listen to the related Root Causes podcast).

The ecosystem has fast work to do. Manufacturers must secure the CAN buses in their existing and future fleets, whether those planes idle on fenced tarmacs or in airplane hangars. In the meantime, CISA counsels that aircraft owners restrict access to planes' avionics components "to the best of their abilities,” leaving passengers to hope security soon extends beyond their TSA experiences.

Read this blog online at https://sectigo.com/blog/how-pki-and-embedded-security-can-help-stop-aircraft-cyberattacks

Let's be honest. Many people resist technological change, both in their own lives and at the workplace. What they often need, however, is the vision to see how the new technology they oppose will improve their lives later on.

Blockchain has emerged as a major game-changer for businesses worldwide. Upcoming and budding entrepreneurs have recognized its real potential, and it is one of the most hotly discussed technologies in the business world right now. More than 25 industry sectors have recognized the technology's potential and are keen to work with the right technology partner.

Bitcoin, and the blockchain technology behind it, did not disrupt the world as was first thought when Satoshi Nakamoto published his invention in 2009. More recently, however, blockchain has become one of the most widely discussed buzzwords, not only in the payment industry but across many industries. In fact, some believe blockchain technology could eventually be more important than the web.

Industries Being Transformed by Blockchain Technology

The first application of blockchain technology was digital cash such as Bitcoin. The technology's potential lies in its versatility across a wide range of applications and use cases in many industries. Here are some industries that blockchain is ready to disrupt:

1. Banking

Ironically, banks are now beginning to embrace blockchain technology, even though cryptocurrencies were originally created to eliminate reliance on, and trust in, financial intermediaries. Banks act as intermediaries for a host of financial services around the world, and blockchain will change how many daily banking tasks are carried out over the next decade.

With blockchain, transfers between two parties on opposite sides of the world can work as if the parties were right next to each other. Blockchain could also help banks move currency within their own organizations, and banks could establish their own managed cryptocurrencies to replace traditional dollars.

2. Manufacturing

Experts have stated that the market for blockchain in manufacturing is expected to be worth around $30 million by 2020 and to keep growing at an annual rate of 80 percent, reaching $566 million by 2025. Major cryptocurrency markets such as Japan and South Korea have been encouraging the development of blockchain in manufacturing and the use of decentralized systems across different industries.
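The 2020 and 2025 figures are consistent with compound annual growth, as a quick Python check shows. The inputs are the figures quoted in the text; the variable names are illustrative.

```python
start_value = 30e6   # USD, projected market size for 2020 (from the text)
growth_rate = 0.80   # 80 percent per year (from the text)
years = 2025 - 2020

# Compound growth: value_n = value_0 * (1 + r) ** n
projected = start_value * (1 + growth_rate) ** years
print(f"Projected 2025 market: ${projected / 1e6:.0f} million")
```

Five years of 80 percent growth multiplies the starting value by about 18.9, giving roughly $567 million, which matches the quoted $566 million up to rounding.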

3. Industry Applications

Because blockchain technology is encrypted and decentralized, it is being widely investigated as a basis for platforms that support distributed and business communications. The current trend appears to favor decentralized, encrypted messaging applications. Telegram, one such encrypted messaging app, is for example driving the adoption of blockchain applications for different purposes.

4. Blockchain in Food Industry

Imagine you could trace the source of your food down to the minute, or check whether the natural products you bought were really natural. This could happen sooner rather than later, with blockchain technology set to make its debut in the food business.

Blockchain can help in several ways:

Food safety: Blockchain makes the food supply chain transparent and alerts participants in the chain to any food safety incidents. This is one reason why companies like Unilever and Nestlé are considering blockchain technology. With it, buyers could trace the origins of specific products to verify their authenticity.

Preventing Fraud: Blockchain could also help prevent fraud by keeping the information gathered free of human error and tampering, and it would help identify the offender if fraud is committed.

Simpler and Quicker Payment: Blockchain would speed up the payment process. It would help farmers sell more and be paid fairly, since market information would be readily available. It could also prevent retroactive payments and price intimidation.

What is Litecoin? Litecoin is a blockchain-based, decentralized digital cryptocurrency similar to Bitcoin. Litecoin is an open-source global payment network and peer-to-peer cryptocurrency software project released under the MIT/X11 license, which enables near-zero-cost payments anywhere in the world.

Litecoin works much like Bitcoin, using cryptography to create coins and to authorize the transfer of funds. In Litecoin, the ledger stores all transaction information, and once a transaction is confirmed, it can’t be deleted or modified. For people interested in cryptocurrency investments other than Bitcoin and Ethereum, Litecoin is a popular choice.

Because of its availability and lower price, Litecoin is often called the “Silver” cryptocurrency, while Bitcoin is the “Gold” cryptocurrency.
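The claim that confirmed entries can't be altered rests largely on hash chaining: each block stores the hash of the block before it, so changing an old entry breaks every link after it. The following minimal Python sketch shows only that tamper-evidence property. The block layout and the helper names (`block_hash`, `build_chain`, `is_valid`) are hypothetical; real Litecoin blocks use Merkle trees, proof of work, and a distributed network of nodes that reject invalid chains.

```python
import hashlib
import json


def block_hash(index, prev_hash, transactions):
    """SHA-256 over the block's contents, including the previous block's hash."""
    payload = json.dumps(
        {"index": index, "prev": prev_hash, "txs": transactions}, sort_keys=True
    ).encode()
    return hashlib.sha256(payload).hexdigest()


def build_chain(batches):
    """Link each batch of transactions to the hash of the block before it."""
    chain, prev = [], "0" * 64
    for i, txs in enumerate(batches):
        h = block_hash(i, prev, txs)
        chain.append({"index": i, "prev": prev, "txs": txs, "hash": h})
        prev = h
    return chain


def is_valid(chain):
    """Recompute every hash and check every back-link."""
    prev = "0" * 64
    for block in chain:
        if block["prev"] != prev:
            return False
        if block_hash(block["index"], block["prev"], block["txs"]) != block["hash"]:
            return False
        prev = block["hash"]
    return True


chain = build_chain([["alice->bob 5 LTC"], ["bob->carol 2 LTC"]])
print(is_valid(chain))                     # True
chain[0]["txs"][0] = "alice->bob 500 LTC"  # tamper with a confirmed entry
print(is_valid(chain))                     # False
```

In a real network, an attacker would also have to redo the proof of work for every later block and convince the other peers, which is what makes confirmed history practically permanent.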

“If Bitcoin is Gold then Litecoin is Silver”


Birth of Litecoin

Litecoin was created by former Google employee Charlie Lee on October 7, 2011, and its network went live on October 13, 2011, with the vision of creating a lighter version of Bitcoin. Charlie Lee is one of the most active figures on social media and blogs.

Benefits of Litecoin

Litecoin processes transactions quickly: a new block is generated roughly every 2.5 minutes, four times as fast as Bitcoin. This is its main goal, to reduce block confirmation time so that more transactions can take place. At the block reward in effect at the time of writing, this works out to 14,400 Litecoins mined per day. Its algorithm is also difficult to crack.
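The 2.5-minute block time and the 14,400-coins-per-day figure are linked by simple arithmetic, but the daily figure also depends on the block reward. The sketch below assumes a reward of 25 LTC per block, the reward in effect between Litecoin's 2015 and 2019 halvings; the reward halves periodically, so the daily figure changes over time.

```python
MINUTES_PER_DAY = 24 * 60


def blocks_per_day(block_time_minutes):
    """Average number of blocks produced per day for a given target block time."""
    return MINUTES_PER_DAY / block_time_minutes


ltc_blocks = blocks_per_day(2.5)  # Litecoin target block time
btc_blocks = blocks_per_day(10)   # Bitcoin target block time
print(ltc_blocks, btc_blocks, ltc_blocks / btc_blocks)  # 576.0 144.0 4.0

# Assumption: 25 LTC per block (the reward between the 2015 and 2019 halvings).
block_reward_ltc = 25
print(ltc_blocks * block_reward_ltc)  # 14400.0
```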

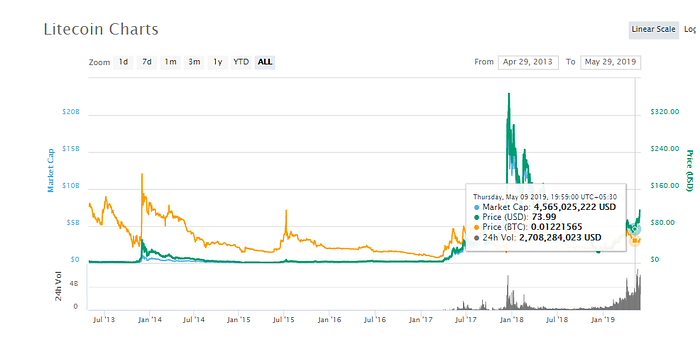

Litecoin's market has grown enormously. On December 18, 2017, Litecoin reached a high of $360.93. Compared with its 2016 price of $4.40, that is an increase of roughly 82 times, or about 8,100%, in one year.
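Price multiples and percentage gains are easy to conflate, so here is a small Python check of the figures quoted in this section. The `growth` helper is illustrative; the prices come from the text.

```python
def growth(start, end):
    """Return (multiple, percent_gain) for a move from start to end."""
    multiple = end / start
    percent_gain = (end - start) / start * 100
    return multiple, percent_gain


m, pct = growth(4.40, 360.93)
print(f"{m:.1f}x, {pct:.0f}% gain")  # 82.0x, 8103% gain
print(growth(4, 320))                # (80.0, 7900.0)
```

An 82x multiple corresponds to a gain of about 8,100%, not 8,200%, because the percentage gain excludes the original stake (gain = multiple minus 1). Likewise, the 80x return from $4 to $320 mentioned later is a 7,900% gain.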

The Scrypt algorithm used in Litecoin's proof-of-work model is considered difficult to crack, which supports secure and fast transactions. Litecoin's processing fees are also lower than those of bank transfers and credit cards.

Litecoin runs on blockchain technology, so it records all transaction information permanently and distributes it to all peers in the network. Because Litecoin works over peer-to-peer connections, no middleman or central server is needed, which reduces the cost of transactions. Litecoin transactions are also faster than Bitcoin's, so they take less time while remaining secure, and transaction fees are lower.

The Differences Between Litecoin and Bitcoin

Source: Coindesk

Algorithm:

Both Bitcoin and Litecoin use a proof-of-work algorithm. The difference is that Bitcoin uses the SHA-256 algorithm, whereas Litecoin uses the newer Scrypt hashing algorithm.
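The difference can be shown with Python's standard `hashlib`. Bitcoin's proof of work applies SHA-256 twice to an 80-byte block header, while Litecoin's applies scrypt with parameters N=1024, r=1, p=1 and a 32-byte output, using the header as both password and salt. The header below is a made-up placeholder rather than a real block, and no mining (searching for a hash below a target) is done. `hashlib.scrypt` requires a Python build linked against OpenSSL 1.1 or newer.

```python
import hashlib

# Placeholder standing in for a serialized 80-byte block header.
header = bytes(range(80))

# Bitcoin-style proof-of-work hash: SHA-256 applied twice.
btc_pow_hash = hashlib.sha256(hashlib.sha256(header).digest()).digest()

# Litecoin-style proof-of-work hash: scrypt(N=1024, r=1, p=1), 32-byte output,
# with the header used as both the password and the salt.
ltc_pow_hash = hashlib.scrypt(header, salt=header, n=1024, r=1, p=1, dklen=32)

print(btc_pow_hash.hex())
print(ltc_pow_hash.hex())
```

Both produce a 256-bit value that miners compare against a difficulty target, but scrypt also needs a block of working memory (about 128 KB with these parameters) for each hash, which is why it behaves differently from SHA-256 on mining hardware.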

The Number of Coins that Each Cryptocurrency Can Produce:

In Bitcoin, a new block is added to the ledger roughly every 10 minutes, whereas Litecoin confirms a block in about 2.5 minutes. A total of 84 million Litecoins will be created, of which about 61 million have been created so far; that is four times Bitcoin's maximum supply. Because of its faster block rate, Litecoin produces more coins than Bitcoin.

The Bitcoin network will never exceed a maximum of 21 million coins, whereas Litecoin can hold up to 84 million coins.

The real-world importance of these algorithms shows up in the mining of new coins. Both cryptocurrencies require substantial computing power to confirm transactions. SHA-256 is usually considered a more complex algorithm than Scrypt, while at the same time allowing a greater degree of parallel processing.
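Where the 21 million and 84 million caps come from can be shown by summing the block rewards. Both coins started with a 50-coin reward that halves periodically: Bitcoin every 210,000 blocks and Litecoin every 840,000 blocks (about four years in each case, given the 10-minute and 2.5-minute block times). The sketch below is a simplified model that counts in the smallest unit (10^-8 of a coin) and halves with an integer right shift, as Bitcoin's reference code does; it ignores coins lost or rewards left unclaimed in practice.

```python
def max_supply(halving_interval_blocks, initial_reward_coins=50, units_per_coin=10**8):
    """Sum every block reward, halving the reward (integer right shift)
    every `halving_interval_blocks` blocks until it reaches zero."""
    reward = initial_reward_coins * units_per_coin
    total = 0
    while reward > 0:
        total += halving_interval_blocks * reward
        reward >>= 1
    return total


btc = max_supply(210_000)  # Bitcoin halves every 210,000 blocks
ltc = max_supply(840_000)  # Litecoin halves every 840,000 blocks
print(f"{btc / 10**8:,.4f}")  # 20,999,999.9769
print(f"{ltc / 10**8:,.4f}")  # 83,999,999.9076
```

With the same starting reward and a halving interval four times as long (in blocks), Litecoin's cap comes out at exactly four times Bitcoin's; both fall just short of the round numbers because of integer rounding at each halving.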

Market Capitalization:

Bitcoin’s market capitalization is more than $67 billion, whereas Litecoin’s market capitalization is below $3 billion.

Future of Litecoin in Crypto Market:

Litecoin is the fifth-largest cryptocurrency, with a market capitalization of more than $12 billion. Its price has historically been higher than that of most other cryptocurrencies.

Litecoin's value rose sharply and steadily at the start of 2019: its market capitalization grew by about 200 percent in only the first six months of the year.

Investors who want to hold cryptocurrency for the long term may choose Litecoin with the goal of maximizing profit.

Litecoin's price rose from $4 in March 2017 to a high of $320 in less than a year, giving its investors 80x returns.

How to Buy LTC:

Step 1: Create an account (on any official website)

Step 2: Verify your identity

Step 3: Get a Litecoin wallet

Step 4: Find an exchange that sells Litecoin

Step 5: Deposit money and make the trade

Facebook has finally unveiled plans for a cryptocurrency called Libra, one of the worst-kept secrets in the history of digital currency.

On June 18, 2019, Facebook released its long-awaited white paper for the cryptocurrency and its blockchain-based financial infrastructure project.

Called Libra, the new currency will launch as soon as next year and will be what's known as a stablecoin: a digital currency backed by established government-backed currencies and securities. The objective is to avoid fluctuations in value. Libra will be usable in Facebook-owned apps such as WhatsApp and Messenger, as well as in other standalone apps. The new digital currency will be linked to a basket of major currencies, such as the US dollar and the euro, and compared with other currencies it should make sending money cheaper and easier. According to the latest reports, Libra is planned to launch in the first half of next year.

Today, almost everyone has a mobile phone, but not everyone has a bank account. Libra's mission is to create a simple global financial infrastructure that empowers billions of people around the world.

David Marcus, who previously ran Facebook Messenger, said Facebook plans to create a new digital wallet that will exist within Messenger and its separate messaging service, WhatsApp. Once Libra is up and running, the currency and the digital wallet should make it easier for people to send money to friends, family, and businesses through the apps.

If Libra succeeds, it could make Facebook the biggest player in financial services. Facebook and its family of apps, such as Instagram and WhatsApp, currently have 2.7 billion users, and Libra could bring billions of people into the digital financial system, allowing them to bypass costly banking infrastructure and avoid smaller, volatile currencies.

Libra will be governed by a not-for-profit, Switzerland-based consortium, the “Libra Association,” made up of Facebook and 27 other partners. The association includes nonprofit organizations, crypto firms, venture capital firms, telecommunication and technology companies, and payment service providers such as Mastercard, PayPal, PayU, Stripe, Visa, eBay, Farfetch, Lyft, Spotify, Uber, Kiva, Mercy Corps, Women’s World Banking, Anchorage, Coinbase, Xapo, and Bison Trails.

The total number of Libra coins will change: new coins will be issued whenever someone exchanges an existing fiat currency for Libra, and the value should not fluctuate any more than that of other stable currencies, according to David Marcus, head of the Facebook blockchain team that’s spearheading the project.

Libra is built on blockchain technology, which is decentralized: it will be run by many different organizations instead of just one. It will be available to anyone with an internet connection, has low fees and costs, and is secured by cryptography, which helps keep your money safe.
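The idea that the number of coins changes with demand can be illustrated with a toy reserve-backed token in Python: coins are minted only when money is deposited into a reserve and destroyed when they are redeemed, so circulating supply always matches the reserve. This is a hypothetical model, not Libra's actual implementation. The `ReserveBackedToken` class is invented for illustration, and it assumes a fixed 1:1 rate, whereas Libra was to be priced against a basket of currencies.

```python
from decimal import Decimal


class ReserveBackedToken:
    """Toy model: every token in circulation is matched by reserve assets.
    Hypothetical; assumes a fixed 1:1 rate between tokens and reserve units."""

    def __init__(self):
        self.supply = Decimal("0")
        self.reserve = Decimal("0")

    def buy(self, fiat_amount: Decimal) -> Decimal:
        # New tokens are minted only when fiat is deposited into the reserve.
        self.reserve += fiat_amount
        self.supply += fiat_amount
        return fiat_amount

    def redeem(self, token_amount: Decimal) -> Decimal:
        # Redeemed tokens are destroyed and the matching reserve is paid out.
        if token_amount > self.supply:
            raise ValueError("cannot redeem more than the circulating supply")
        self.supply -= token_amount
        self.reserve -= token_amount
        return token_amount


token = ReserveBackedToken()
token.buy(Decimal("100"))
token.redeem(Decimal("40"))
print(token.supply, token.reserve)  # 60 60
```

Because minting and burning are tied to reserve deposits and withdrawals, the supply grows and shrinks with demand instead of following a fixed schedule like Bitcoin's or Litecoin's.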